The short answer

The posts that actually worked are the ones that beat a meaningful comparison group. Not the industry average. Not last year. The posts next to them, for the same client, in the same quarter, on the same platform. Without that side-by-side view, a single post's numbers are unreadable.

Agencies running 5 to 15 clients feel this more than anyone, because the comparison work multiplies with every account. This article walks through three comparison patterns that turn 20 published posts into decisions your next client call can actually use. It also shows why a side-by-side view (like ZoomSphere's Compare Results inside Bulk Actions) replaces the end-of-month spreadsheet rebuild that most agency teams quietly dread.

{{form-component}}

The real problem isn't measurement. It's the comparison gap.

Every social media manager we talk to tracks numbers: reach, impressions, saves, clicks, followers. The native dashboards hand you plenty, and most teams export some version of that into a spreadsheet at the end of the month.

That's not where things break. They break at the next step: turning rows of data into a decision.

A post got 412 impressions on LinkedIn. Is that good? You can't answer the question from the number itself. It's good if it's your highest-performing LinkedIn post that month for that client. It's bad if four of the client's other five posts beat it, and this one ate the most production time. The number alone says nothing. The comparison says everything.

That's the comparison gap. Agency teams measure post by post, client by client, then stare at the rows and feel vaguely productive, because the spreadsheet is full. But nobody stops to ask the one useful question, the one that makes the next client call worth having: compared to what?

The scale is what makes this so painful at an agency. AgencyAnalytics' client-reporting benchmarks report found that 61.8% of agencies pull data from 3 to 5 separate platforms per client, 26.4% pull from 6 to 10, and 2.8% pull from 11 or more. Agencies log into those platforms separately for every client, every reporting cycle. A portfolio of 10 clients means the same login, copy, paste, reconcile loop repeated 10 times before anyone has made a single decision. Sprout Social's survey of 500 marketers puts the cost at 3.8 hours per week spent on data analysis and reporting, roughly 16 hours a month. Most of that time goes to measurement. Very little of it goes to comparison.

How to compare social media post performance across platforms

Four steps, in this order:

- Normalize the metric. Don't compare Facebook's native engagement rate to TikTok's. They aren't the same formula. Convert every post to interactions per 1,000 impressions (IPM) as your cross-platform number.

- Pick a meaningful comparison group. Not industry benchmarks. Not last year. Pick a group for this client, on this platform, from the same quarter: top 5 vs. bottom 5, campaign posts vs. evergreen, version A vs. version B.

- Line them up side by side with previews. Post images or thumbnails next to the metrics. Without the preview, you can't see hooks, formats, or visual patterns. Numbers alone won't show it.

- Ask what the winners share that the losers don't. Hook? Format? Day of week? Caption length? Opening line? The answer becomes that client's content hypothesis for next quarter.
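The number-crunching half of those four steps fits in a few lines of Python. This is a minimal sketch. It assumes you already have each post's interactions and impressions from an export. `Post`, `ipm`, `comparison_group`, and `top_and_bottom` are hypothetical names for illustration, not part of ZoomSphere or any platform API. Steps 3 and 4 still need human eyes on the previews.

```python
from dataclasses import dataclass


@dataclass
class Post:
    # Hypothetical record for one published post; field names are illustrative.
    post_id: str
    client: str
    platform: str
    quarter: str
    interactions: int
    impressions: int


def ipm(post: Post) -> float:
    """Step 1: interactions per 1,000 impressions, the cross-platform number."""
    if post.impressions == 0:
        return 0.0
    return post.interactions * 1000 / post.impressions


def comparison_group(posts: list[Post], client: str, platform: str, quarter: str) -> list[Post]:
    """Step 2: same client, same platform, same quarter."""
    return [p for p in posts if (p.client, p.platform, p.quarter) == (client, platform, quarter)]


def top_and_bottom(group: list[Post], n: int = 5) -> tuple[list[Post], list[Post]]:
    """Rank by IPM and return the n strongest and n weakest posts to line up side by side."""
    ranked = sorted(group, key=ipm, reverse=True)
    n = min(n, len(ranked) // 2)  # shrink n so winners and losers never overlap
    return ranked[:n], ranked[len(ranked) - n:]


# Invented numbers for one client
posts = [
    Post("p1", "acme", "linkedin", "Q1", interactions=30, impressions=1000),
    Post("p2", "acme", "linkedin", "Q1", interactions=10, impressions=1000),
    Post("p3", "acme", "linkedin", "Q1", interactions=50, impressions=2000),
    Post("p4", "acme", "linkedin", "Q1", interactions=5, impressions=1000),
    Post("p5", "acme", "instagram", "Q1", interactions=100, impressions=1000),
]
top, bottom = top_and_bottom(comparison_group(posts, "acme", "linkedin", "Q1"))
print([p.post_id for p in top], [p.post_id for p in bottom])
```

With only four LinkedIn posts in the group, the sketch compares the top 2 against the bottom 2 rather than 5 against 5.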

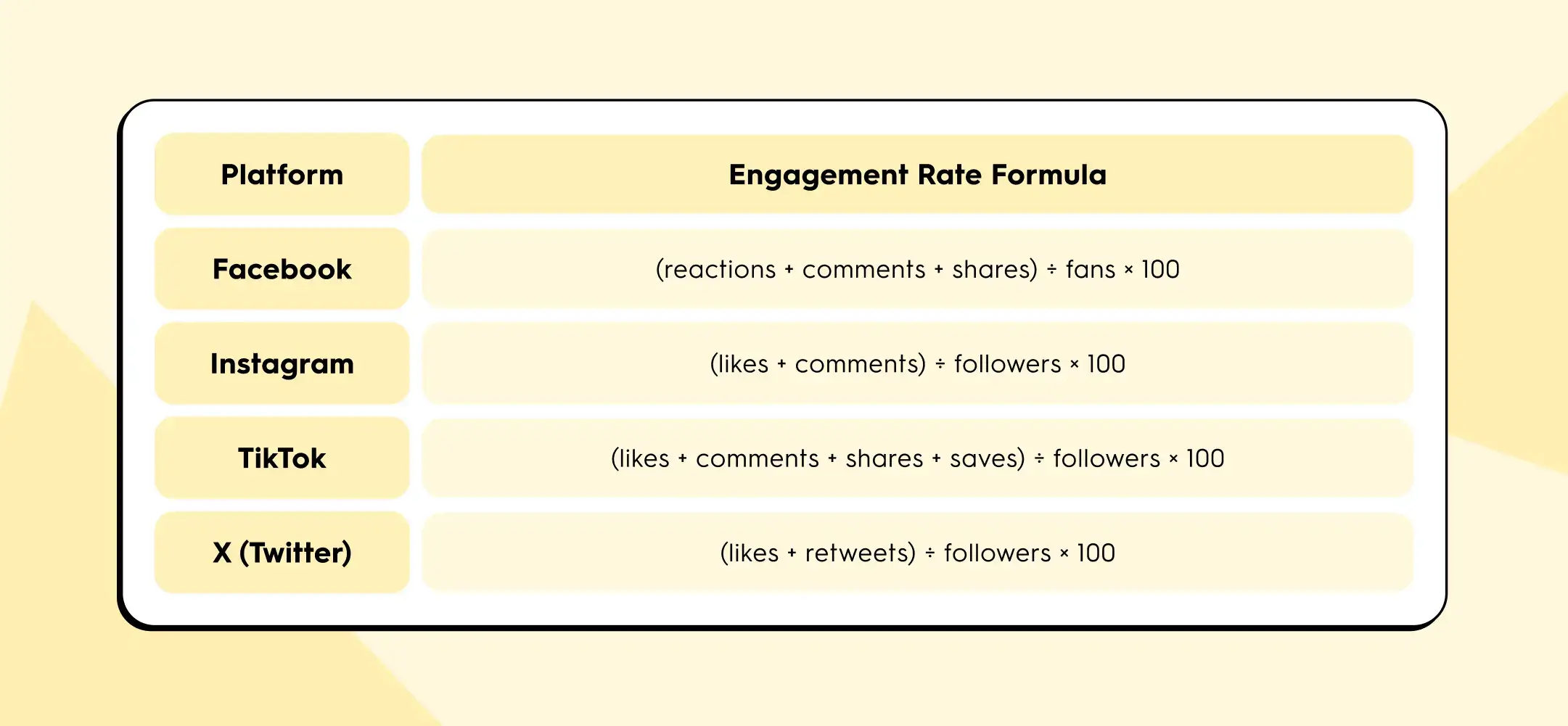

The trap in step 1 is that each platform defines engagement rate differently. According to Socialinsider's 2026 benchmarks methodology, the formulas look like this:

Buffer's State of Social Media Engagement 2026 adds another wrinkle: LinkedIn's engagement rate includes clicks, while most other platforms don't.

If you put all four numbers in the same row of your client report, you are not comparing content performance. You are comparing four different pieces of arithmetic.

IPM solves this because impressions is the one denominator every platform exposes, and interactions (any user action beyond scrolling past) can be counted consistently.
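Here is why the native numbers cannot share a row. The sketch runs one hypothetical set of counts through simplified versions of the per-platform formulas, as this article's FAQ summarizes them:

- Facebook: reactions, comments, and shares per fan.
- Instagram: likes and comments per follower.
- TikTok: the same, plus saves.

The TikTok formula is an assumption, and so is treating Facebook reactions as `likes`. Socialinsider's and Buffer's exact definitions may differ.

```python
# One hypothetical set of raw counts (made-up numbers).
counts = {
    "likes": 40,        # also stands in for Facebook reactions
    "comments": 10,
    "shares": 5,
    "saves": 8,
    "followers": 5000,  # fans on Facebook, followers elsewhere
    "impressions": 3000,
}


def facebook_er(c: dict) -> float:
    # Simplified: (reactions + comments + shares) / fans, as a percentage
    return (c["likes"] + c["comments"] + c["shares"]) * 100 / c["followers"]


def instagram_er(c: dict) -> float:
    # Simplified: (likes + comments) / followers, as a percentage
    return (c["likes"] + c["comments"]) * 100 / c["followers"]


def tiktok_er(c: dict) -> float:
    # Assumption: Instagram's numerator plus saves, over followers
    return (c["likes"] + c["comments"] + c["saves"]) * 100 / c["followers"]


def ipm(c: dict) -> float:
    # Every counted action, per 1,000 impressions
    interactions = c["likes"] + c["comments"] + c["shares"] + c["saves"]
    return interactions * 1000 / c["impressions"]


for name, formula in [("Facebook ER %", facebook_er), ("Instagram ER %", instagram_er),
                      ("TikTok ER %", tiktok_er), ("IPM", ipm)]:
    print(f"{name}: {formula(counts):.2f}")
```

The same counts produce three different "engagement rates" (1.10, 1.00, and 1.16). Only the IPM line uses a denominator every platform reports.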

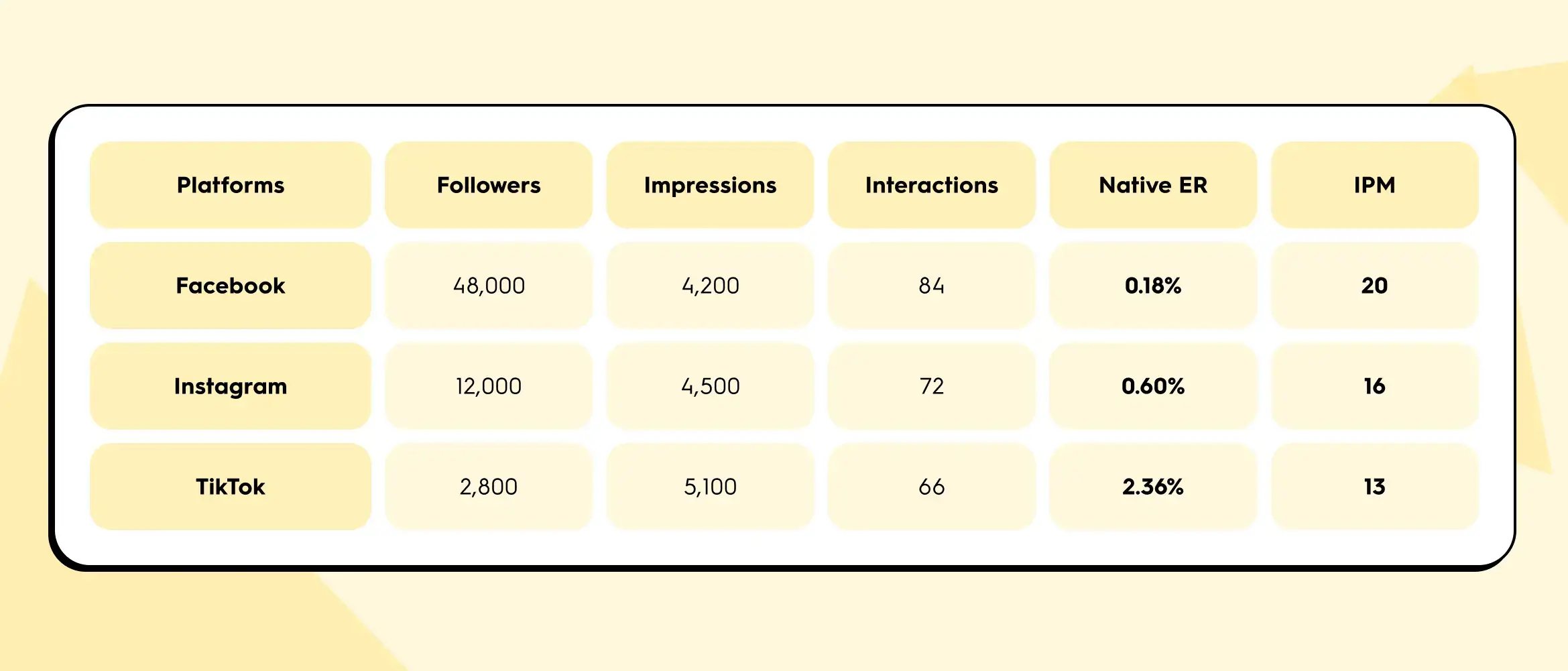

Here's a worked example. One agency client, one campaign post, cross-published to three platforms:

Native engagement rate ranks TikTok first by a mile (2.36% crushes 0.18%). But IPM ranks Facebook first. The disagreement isn't a calculation error. Native ER is inflated on TikTok because the follower denominator is small. Per 1,000 actual eyeballs on the content, Facebook drove the most interactions.

Which one matters depends on the question the client is asking. If the question is "which of our owned channels has the most loyal audience?", native ER on each platform gives you that. If the question is "which channel actually carried this post?", IPM gives you the honest answer. For cross-platform comparisons inside a client report, IPM is the safer metric. For single-platform trend lines over time, native ER is fine. A useful cross-client habit: report IPM as your standard comparison number across every account in the portfolio, then layer platform-native ER underneath for platform-specific conversations.
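The ranking flip in the worked example is easy to reproduce. The sketch below uses made-up numbers, not the figures from the example. It also applies one simplified follower-based rate to every platform, which is an assumption. The only point is that a small follower count inflates a per-follower rate, while IPM stays tied to actual impressions.

```python
# Hypothetical cross-posted campaign post; all numbers are invented.
results = {
    "facebook": {"interactions": 60, "impressions": 2000, "followers": 40000},
    "instagram": {"interactions": 45, "impressions": 2500, "followers": 9000},
    "tiktok": {"interactions": 80, "impressions": 5000, "followers": 3200},
}


def follower_rate(m: dict) -> float:
    # Simplified native-style rate: interactions per follower, in percent
    return m["interactions"] * 100 / m["followers"]


def ipm(m: dict) -> float:
    # Interactions per 1,000 impressions
    return m["interactions"] * 1000 / m["impressions"]


def rank(metric) -> list[str]:
    # Platforms ordered from best to worst on the given metric
    return sorted(results, key=lambda platform: metric(results[platform]), reverse=True)


print("By follower rate:", rank(follower_rate))
print("By IPM:", rank(ipm))
```

TikTok tops the follower-based ranking (2.5%) and finishes last on IPM (16 per 1,000 impressions). Facebook does the reverse.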

Three comparisons your monthly report actually needs

Instead of reporting numbers, report differences. These are the three comparison patterns that turn a wall of data into three useful client conversations.

1. Multi-platform post: which channel actually carried the content?

Your team wrote one piece and published it to Instagram, LinkedIn, and Facebook for a client. In most reports, this shows up as three separate rows with three separate sets of numbers. Nobody goes back to check whether the same content performed differently across the three channels.

That's where one of the highest-value insights in monthly reporting quietly hides. Same hook, same copy, same image. Different reach curve, different interaction mix, different click-through behaviour. The story usually isn't "one platform underperformed." The story is which platform is that client's distribution channel, which is their awareness channel, and whether their cross-posting budget is allocated accordingly.

You can't see that without a side-by-side view. And in the native dashboards, a side-by-side view of the same post across three platforms simply doesn't exist.

2. Campaign retrospective: top 5 vs. bottom 5

Pick any campaign from last quarter, from any client in the portfolio: the posts tied to an event, a product launch, or a content series. Line up the five strongest performers next to the five weakest and ask one question: what do the winners have in common that the losers don't?

Usually it comes down to one of four things:

- the hook

- the visual format

- the day of the week

- the length of the caption

You don't need an analytics degree to spot the pattern. You need the posts in front of you, in a row, so your eyes can do what spreadsheets actively prevent them from doing.

This is the fastest way to generate content hypotheses for next quarter across every client you manage. It's also the hardest to do in a standard analytics export, because the preview image is never next to the numbers. Without the preview, you are reconciling post IDs in your head.
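Once the previews have told you each post's hook, format, weekday, and caption length, tallying them is mechanical. This is a minimal sketch. It assumes you have tagged each post by hand, and the tag values and the `shared_traits` helper are hypothetical. The output is a content hypothesis to test next quarter, not proof.

```python
from collections import Counter

TRAITS = ("hook", "format", "weekday", "caption_length")


def shared_traits(top: list[dict], bottom: list[dict]) -> dict[str, str]:
    """Return trait values held by most winners and by none of the losers."""
    found = {}
    for trait in TRAITS:
        winner_counts = Counter(post[trait] for post in top)
        loser_values = {post[trait] for post in bottom}
        for value, count in winner_counts.items():
            # A strict majority means at most one value per trait can qualify
            if count > len(top) / 2 and value not in loser_values:
                found[trait] = value
    return found


# Hand-tagged, invented examples
top = [
    {"hook": "question", "format": "carousel", "weekday": "Tue", "caption_length": "short"},
    {"hook": "question", "format": "video", "weekday": "Wed", "caption_length": "short"},
    {"hook": "question", "format": "carousel", "weekday": "Tue", "caption_length": "long"},
]
bottom = [
    {"hook": "statistic", "format": "carousel", "weekday": "Fri", "caption_length": "long"},
    {"hook": "announcement", "format": "single image", "weekday": "Tue", "caption_length": "short"},
]
print(shared_traits(top, bottom))
```

Three winners and two losers keep the example short. In practice you would pass the top 5 and bottom 5. Here only the question hook survives, because carousels and Tuesdays also show up among the losers.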

3. A/B test: why, not just what

Your team tested two captions for the same visual. One performed better than the other. Fine. The spreadsheet tells you which one won, but it doesn't tell you why.

Seeing the captions next to each other, with the numbers underneath, is where the "why" shows up. Maybe Caption A opened with a question and Caption B opened with a statistic. The visual was identical. The delta is the opening line. You now have a reusable principle for the next 30 posts, across multiple clients in similar categories, not just a winner for this one.

The content preview next to the metrics is the thing that turns a data row into a learning.
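The "why" step amounts to putting each variant's opening line next to its number. Here is a small sketch. It assumes both captions ran on the same visual and that you have interactions and impressions for each. The captions and counts are invented, and `ab_rows` is a hypothetical helper.

```python
def ipm(interactions: int, impressions: int) -> float:
    # Interactions per 1,000 impressions
    return interactions * 1000 / impressions


def ab_rows(variants: dict[str, dict]) -> list[str]:
    """One row per variant, best first: the score next to the caption's opening line."""
    scored = sorted(
        variants.items(),
        key=lambda item: ipm(item[1]["interactions"], item[1]["impressions"]),
        reverse=True,
    )
    rows = []
    for name, v in scored:
        opening_line = v["caption"].splitlines()[0]
        rows.append(f"{name}: IPM {ipm(v['interactions'], v['impressions']):.1f} | opens with: {opening_line}")
    return rows


variants = {
    "A": {"caption": "What does your team forget every Monday?\nHere's our checklist.",
          "interactions": 84, "impressions": 3000},
    "B": {"caption": "[statistic] of teams forget the same thing every Monday.\nHere's our checklist.",
          "interactions": 60, "impressions": 3000},
}
for row in ab_rows(variants):
    print(row)
```

The visual is identical, so the printed rows isolate the opening line as the difference. With small numbers, treat a gap as a lead to test again rather than a settled rule.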

From spreadsheet sprawl to side by side

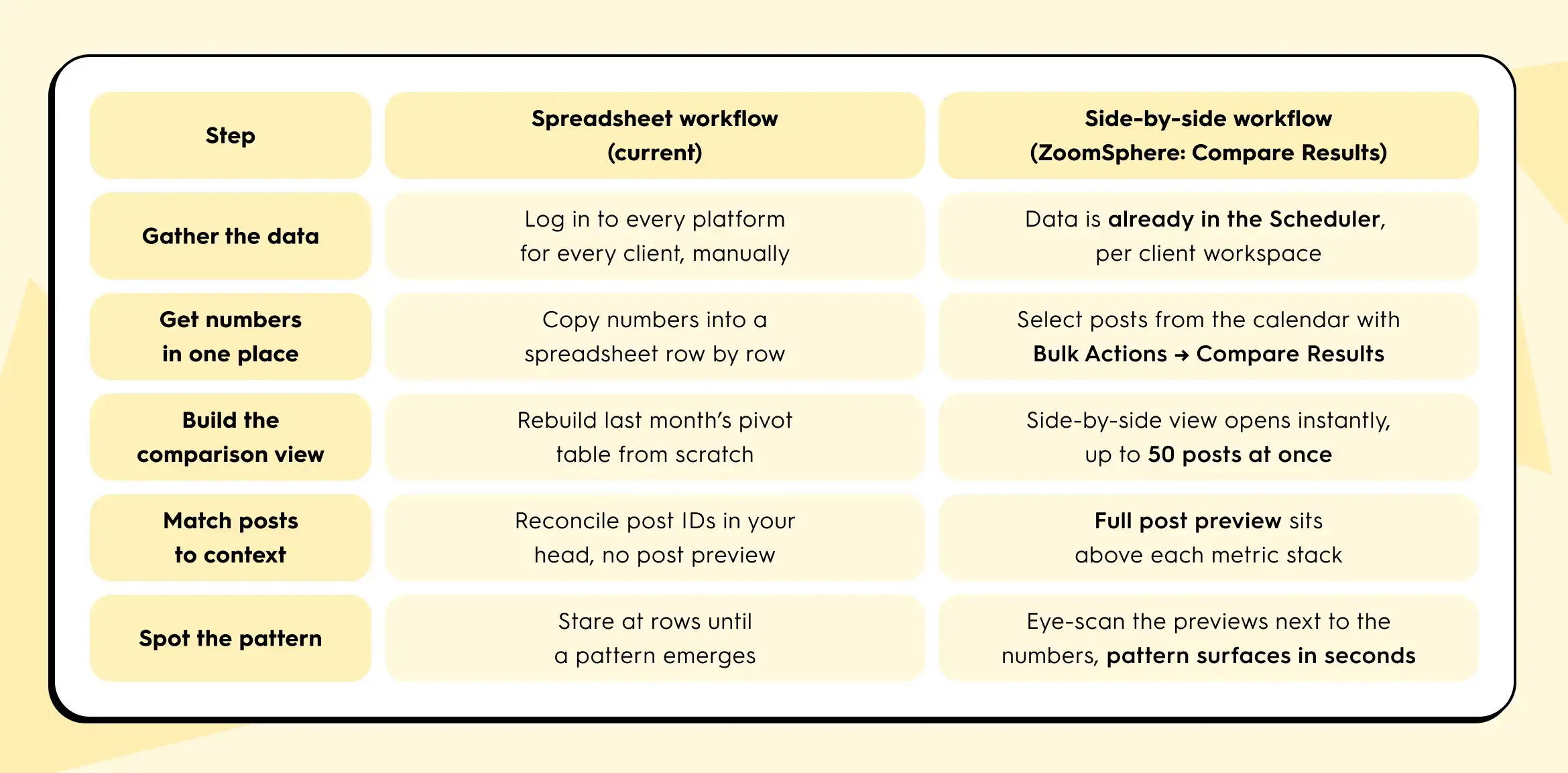

Same task, same client, two workflows. Here's what actually changes, step for step:

The side-by-side approach collapses the first five rows into roughly one view. You still write the report at the end. But the thinking and the comparison now happen in minutes instead of hours, per client.

The 15-minute Q1 review that actually tells you something

It's late April. Q1 closed three weeks ago, and Q2 content planning lands on this week's agenda across every client in your portfolio.

You won't get 60 minutes per client; you'll get 15. Here's a review that fits that block, starting with the accounts that matter most:

- Start with your top three highest-revenue or highest-visibility clients. Open the calendar view of the last 90 days for each, one at a time.

- Select the top 5 and bottom 5 performers by reach for that client. Compare them side by side. Note the pattern.

- Select every post from the biggest campaign that client ran that quarter. Compare them. Note which hooks and formats worked.

- Select every version of the one post that was cross-posted to multiple platforms for that client. Compare them. Note which channels actually pulled their weight.

Three comparisons per client. Fifteen minutes per client. Cycle through the portfolio in priority order until planning week is covered. You walk into each Q2 planning call with three specific, defensible statements about what worked and what didn't for that specific client, grounded in side-by-side evidence. That's the shift from "here are the numbers" to "here's what we're going to do differently for you."
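If your tool can export a client's posts, you can pull the three selections automatically before the 15 minutes start. This is a minimal sketch, and it rests on a few assumptions:

- Each post is a dict with a publish date, reach, an optional campaign name, and a `content_id` shared by every cross-posted version.
- "Biggest campaign" means the one with the most posts.
- All field names, helpers, and dates are hypothetical.

```python
from collections import Counter, defaultdict
from datetime import date, timedelta


def review_selections(posts: list[dict], today: date, n: int = 5) -> dict[str, list[str]]:
    """Build the three comparison sets for one client's last 90 days of posts."""
    recent = [p for p in posts if today - p["published"] <= timedelta(days=90)]

    # 1. Top n vs. bottom n by reach (n shrinks so the two sets never overlap)
    ranked = sorted(recent, key=lambda p: p["reach"], reverse=True)
    n = min(n, len(ranked) // 2)
    top, bottom = ranked[:n], ranked[len(ranked) - n:]

    # 2. Every post from the campaign with the most posts
    sizes = Counter(p["campaign"] for p in recent if p["campaign"])
    biggest = sizes.most_common(1)[0][0] if sizes else None
    campaign = [p for p in recent if p["campaign"] == biggest] if biggest else []

    # 3. The piece of content published to the most distinct platforms
    versions = defaultdict(list)
    for p in recent:
        versions[p["content_id"]].append(p)

    def platform_count(group: list[dict]) -> int:
        return len({p["platform"] for p in group})

    multi = [group for group in versions.values() if platform_count(group) > 1]
    cross_posted = max(multi, key=platform_count, default=[])

    def ids(group: list[dict]) -> list[str]:
        return [p["id"] for p in group]

    return {"top": ids(top), "bottom": ids(bottom),
            "campaign": ids(campaign), "cross_posted": ids(cross_posted)}


def post(pid, published, reach, campaign, content_id, platform):
    # Hypothetical export row
    return {"id": pid, "published": published, "reach": reach, "campaign": campaign,
            "content_id": content_id, "platform": platform}


posts = [
    post("p1", date(2025, 2, 3), 900, "launch", "c1", "instagram"),
    post("p2", date(2025, 2, 3), 400, "launch", "c1", "facebook"),
    post("p3", date(2025, 2, 17), 1200, "launch", "c2", "instagram"),
    post("p4", date(2025, 3, 5), 300, None, "c3", "linkedin"),
    post("p5", date(2025, 3, 20), 700, "webinar", "c4", "linkedin"),
    post("p6", date(2024, 12, 10), 5000, "launch", "c5", "instagram"),  # older than 90 days
]
print(review_selections(posts, today=date(2025, 4, 22)))
```

The code only assembles the sets. Noting the pattern in each comparison is still the part of the 15 minutes that needs you.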

What this looks like in your tool

In ZoomSphere, comparison is built directly into the Scheduler, not bolted on as a separate analytics module. Each client lives in its own workspace, so you move from account to account without rebuilding context. Inside a client's Scheduler, you select posts straight from the calendar using Bulk Actions, then choose Compare Results. The view opens a side-by-side table with each post's preview on top and its metrics (views, reach with organic, viral, and paid breakdowns, interactions, clicks, post saves, and platform-specific counters like Reels Plays and Replays) stacked underneath. You can compare up to 50 real posts at once.

If the same post went to multiple Facebook Pages, channels, or networks, there's a second flow: find the post, click the three-dot menu, choose Compare Results, and you'll see that single post split across all of its sources. It's the fastest way to answer the "is cross-posting actually working for this client, or is one source quietly carrying the whole thing?" question.

No export step. No pivot table. No holding the previous number in your head while you open another native dashboard in another tab.
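The "is one source carrying the whole thing?" question comes down to each source's share of the total. This sketch assumes you have one metric (reach, say) per source for the same post. The 70% threshold and the helper names are arbitrary choices for illustration, not a ZoomSphere setting.

```python
from typing import Optional


def source_shares(results: dict[str, int]) -> dict[str, float]:
    """Each source's percentage of the post's combined total."""
    total = sum(results.values())
    if total == 0:
        return {source: 0.0 for source in results}
    return {source: value * 100 / total for source, value in results.items()}


def carrying_source(results: dict[str, int], threshold: float = 70.0) -> Optional[str]:
    """Return the source behind at least `threshold` percent of the total, if any."""
    shares = source_shares(results)
    if not shares:
        return None
    leader = max(shares, key=shares.get)
    return leader if shares[leader] >= threshold else None


# Invented reach figures for one post published to three Facebook Pages
lopsided = {"Page A": 420, "Page B": 50, "Page C": 30}
balanced = {"Page A": 200, "Page B": 180, "Page C": 120}
print(source_shares(lopsided), carrying_source(lopsided))
print(source_shares(balanced), carrying_source(balanced))
```

In the lopsided case, Page A delivers 84% of the reach, which is the pattern worth raising with the client.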

If your current tool doesn't let you line up 5 posts, 20 posts, or a full campaign's worth of posts next to each other in under a minute, per client, that's the gap between "we measure" and "we learn from measurement." It's the same gap Sprout Social's 3.8-hours-per-week finding points at: a lot of hours spent pulling numbers, a lot fewer spent on the thinking those numbers are supposed to enable.

One thing to try this week

Before Q2 planning locks in, pick one client in your portfolio. Pull up their last 20 published posts. Pick five. Put them side by side. Ask: what do the top two have in common that the bottom three don't?

That's the question that turns a spreadsheet into a strategy. Whether you do it in ZoomSphere or anywhere else, do it once for one client this week. You'll see why we keep talking about it.

If you want to try it inside ZoomSphere, it's already sitting in your Scheduler under Bulk Actions → Compare Results.

{{cta-component}}

Quick answers for cross-platform comparison

A few questions we get from agency managers every time this topic comes up.

What is IPM (interactions per 1,000 impressions)?

IPM is a normalized engagement metric calculated as (interactions ÷ impressions) × 1,000. It expresses how many user actions a post earned per 1,000 eyeballs on the content. Because impressions is the one denominator every major platform exposes, IPM lets you compare Facebook, Instagram, TikTok, LinkedIn, and X posts directly without the definition mismatch that breaks native engagement rate.

Why is engagement rate different on each platform?

Each platform defines engagement rate with its own formula. Facebook uses fans as the denominator and counts reactions, comments, and shares. Instagram uses followers and counts only likes and comments. TikTok adds saves. LinkedIn includes clicks. When you put these side by side in a client report, you are not comparing content performance; you are comparing four different pieces of arithmetic. For an apples-to-apples view across platforms, use IPM.

How do I compare Instagram and TikTok performance for the same client?

For a single cross-posted piece of content, calculate IPM for each platform: (interactions ÷ impressions) × 1,000. Then put the two posts side by side with their previews above the metrics. TikTok will usually win on native engagement rate because its follower denominator is smaller. IPM gives you the honest per-thousand-impressions comparison. Add reach, saves, and (where relevant) shares underneath to read the full story, not just the headline number.

How many posts should I compare at once?

For pattern-spotting, 5 vs. 5 is the sweet spot for top vs. bottom performers. For campaign retrospectives, compare every post in the campaign (usually 8 to 15). For cross-platform reviews of a single piece, compare every destination (2 to 4 platforms). ZoomSphere's Compare Results view handles up to 50 real posts side by side, which covers a full quarter's worth of a campaign for one client without splitting the view.

What's the fastest way to spot a top-performing post pattern?

Line up the top 5 and bottom 5 posts from the last 90 days for one client, with previews next to the metrics. Ask what the winners share that the losers don't. The answer is almost always one of four things: the hook, the visual format, the day of the week, or the caption length. You don't need an analytics degree; you need the posts in a row so your eyes can do the work a spreadsheet prevents.

Your client doesn't think they're slow. That's the whole problem.

They responded. It might have been a thumbs-up on WhatsApp, a "looks good" buried in an email thread from last Tuesday, or a Slack message that arrived while you were in a different client's review. In their mind, the ball went back to your court immediately.

What they don't see is that their approval arrived in three different places, none of which is your scheduler. There is nothing to track, no status to check, no confirmation that anything happened. Somewhere between finding it, confirming it counts, and briefing the change, Tuesday became Thursday, and the post that was supposed to go live Wednesday morning is now officially late.

They think you're slow. You know they're unresponsive. Neither of you is wrong about what you experienced. But one of you is losing the client over it, and it isn't the client.

Q2 is when this breaks visibly. Spring client intake means more accounts, more content calendars, more approval chains running in parallel, on a process that was already showing cracks at three clients. If you have added new clients since January and your inbox feels qualitatively different, it is not a coincidence. The problem did not get worse. It got bigger.

This is the approval problem at its core: not that people don't respond fast enough, but that the process has no shared state. Neither side can see where content actually is. And when visibility is zero, the default assumption on both ends is that the delay belongs to the other person.

{{form-component}}

Why Client Content Approval Takes So Long

The explanation agencies land on first is usually the wrong one: the client is too busy, too indecisive, too disorganized. Sometimes that is true. More often, the client is not slow. They are operating without any signal that action is required right now.

When content travels by email, neither side has a complete picture. The client does not know if what you sent is a working draft or a final version ready to go live. Your team does not know whether the client has opened it, forwarded it to a colleague, or started forming opinions without telling you. When the client does respond, the feedback arrives in fragments: one comment by email, a separate thought on Slack, a verbal note from a call that nobody wrote down.

Nobody assembled these fragments into a single place. Nobody confirmed which version they apply to. Before you can act on any of it, you have to reconstruct a conversation that happened across four channels. That reconstruction is invisible work that shows up nowhere on a timesheet.

Why Email and Slack Make Approval Harder, Not Easier

Email and Slack were not designed to track decisions; they were designed to deliver messages. When approval travels through a message-delivery tool, both sides can read the same thread and reach different conclusions. You see an unanswered request; the client sees a conversation they consider finished. Neither person is misusing the tool. The tool simply has no concept of "pending," "reviewed," or "approved." A thumbs-up emoji is not an audit trail. A reply-all with three new opinions is not a decision.

Every channel you add to an approval chain multiplies this problem. The client approves on WhatsApp. A colleague adds context by email. Someone else leaves a note on Slack. The feedback is spread across all three channels, and a recorded approval exists in none of them. Reconstructing a decision from three channels is not a sign that the client is difficult. It is a sign that the process has no single place where state lives.

Here is what that costs, specifically. The client reviews a post in 90 seconds. The approval cycle around those 90 seconds costs your team an average of 35 to 45 minutes per post. That time goes to composing the handoff message, following up when it goes quiet, finding the reply buried in the wrong thread, reconciling the feedback, and confirming which version is actually approved. At five clients with two posts in approval each week, that is ten posts, or roughly six to seven and a half hours. Not on content. On tracking content.
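The weekly figure is simple multiplication. A short sketch makes the inputs explicit so you can substitute your own. The 35 to 45 minutes per post and two posts per client per week are this article's estimates, and `weekly_approval_hours` is a hypothetical helper.

```python
def weekly_approval_hours(clients: int, posts_per_client: int, minutes_per_post: float) -> float:
    """Hours per week spent on the approval cycle around posts, not on the content itself."""
    return clients * posts_per_client * minutes_per_post / 60


# Five clients, two posts each in approval per week, at 35, 40, and 45 minutes per post
for minutes in (35, 40, 45):
    print(f"{minutes} min/post: {weekly_approval_hours(5, 2, minutes):.1f} h/week")

# The same overhead as client count grows, at the 40-minute midpoint
for clients in (3, 5, 7):
    print(f"{clients} clients: {weekly_approval_hours(clients, 2, 40):.1f} h/week")
```

At five clients, the first loop prints 5.8, 6.7, and 7.5 hours. The second shows the overhead growing in a straight line with client count: 4.0, 6.7, and 9.3 hours.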

58% of working time goes to coordination tasks rather than skilled work: status updates, searching for context, tracking who needs to do what next. Approval chasing is a concentrated version of this in agency work. The difference from most knowledge work is in what drives the growth: in most organizations, coordination overhead scales with headcount. In agencies, it scales with client count. And client count is the variable you are actively trying to increase.

What the Client Is Experiencing While You Wait

Here is the part that rarely gets named: while you are checking inboxes and composing reminder messages, your client is not experiencing a delay. They are experiencing silence.

They sent their feedback. They assume you received it. From their side, things appear to be moving forward. The gap between their assumption and your reality is invisible to them. And invisible gaps, over time, do not create frustration so much as they create doubt.

The client starts wondering whether the agency is on top of things. They do not say this out loud. They ask "where are we with next week's posts?" as a way of checking. When that question becomes a weekly habit, the relationship has already shifted. They are no longer a partner in a shared process. They are a client managing an agency they are not quite sure they trust.

Most agency relationships that end do not end over a bad post or a missed brief. They end after several months of Mondays where the client was not quite sure what was happening, and started taking calls from other agencies who seemed to have their process together.

What Approval Fatigue Actually Is

Approval fatigue is not about volume. A client who reviews ten posts a week is not necessarily more fatigued than one who reviews two. The fatigue comes from decision cost: how much work it takes to reach a yes or no.

When a client receives a post as an email attachment without version context or a clear approval request, they have to reconstruct the entire history before they can begin to evaluate the content. They find the previous thread, recall past agreements, and manually compare versions to see whether their comments were addressed, all before they have looked at the post itself.

A structured workflow eliminates most of this cost before the client opens the post. The content appears in context, with status visible and the request explicit. The client's job is a decision, not an investigation. That distinction is why the same client who takes four days to respond over email can turn around an approval in two hours when the process removes the reconstruction work.

When feedback has one place to live and both sides can see it, the doubt disappears. Not because the content got better. Because the process became legible.

What a Structured Approval Flow Looks Like in Practice

The fix is not a more elaborate process. It is a visible one: every post has a defined state, and both sides can see it without sending a message to find out.

Here is how the same week looks when the process has structure. The flow uses ZoomSphere's actual workflow states.

Stage 1 is yours.

The copywriter drafts, you review internally. A quick check: copy is right, format fits the platform, nothing will make the client wince. The post sits at #Draft, invisible to the client. You are not asking for their opinion on a working draft. You are preparing finished work before it reaches them. That distinction alone changes how clients engage with content when they see it.

Stage 2 is the handoff.

When the post is ready, you change the status to #ToApprove. That is the trigger. The most common setup connects the client's email address to that status, but ZoomSphere gives you six ways to deliver the post from there. Which one you use depends on how the client works and what you have configured:

- ZoomSphere Chat: Select the posts from the calendar and send them as a direct message to your client or manager. With the ZoomSphere mobile app, the client gets a push notification and can review and approve on the go.

- Post Statuses: Changing the status to #ToApprove is itself a visible signal. Your client sees posts grouped under the approval status and knows exactly which content is waiting for their attention.

- Email notification: Connect the client's email address to the #ToApprove status. When the status changes, they receive an automatic email with a direct link to the post as it will appear, including scheduling details. No separate message from you required.

- Bulk Actions email: For larger batches, select multiple posts and ideas, click "Send to Email," and add a personal note. The client receives a single email covering everything that needs approval in one place.

- Post comments with @mentions: Tag the client directly in the post comments using @. They get notified instantly, and their feedback lands on the post rather than in a side thread. This works for internal handoffs too: loop in a teammate on a specific question without leaving the tool.

- Export as PDF or Excel: For clients who prefer a full-scope overview, export the post plan and send it as a PDF or Excel file. Best suited for monthly or weekly reviews where context matters more than speed.

For agencies with a regular publishing rhythm, the first three options (ZoomSphere Chat, post statuses, and email notifications) are the most practical day-to-day: the client receives a direct link the moment the post is ready, with no extra effort from your side.

Stage 3 is the client's two minutes.

The client reviews the post and either approves it (which moves it to #Approved and clears it for scheduling) or leaves a comment directly on it if something needs changing.

Throughout this chain, every post has a visible status. Both your team and the client see it, and nobody has to ask where something is, because the answer is always one click away.
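The "defined state" idea can be modelled as a tiny state machine. This is a sketch of the concept, not ZoomSphere's implementation or API. The status names mirror the three stages above, and `notify` stands in for whichever delivery option you configured. The move back from #ToApprove to #Draft for revisions is an assumption.

```python
from enum import Enum
from typing import Callable


class Status(Enum):
    DRAFT = "#Draft"
    TO_APPROVE = "#ToApprove"
    APPROVED = "#Approved"


# Allowed moves between statuses (the revision loop back to DRAFT is assumed)
TRANSITIONS = {
    Status.DRAFT: {Status.TO_APPROVE},
    Status.TO_APPROVE: {Status.APPROVED, Status.DRAFT},
    Status.APPROVED: set(),
}


class Post:
    def __init__(self, title: str):
        self.title = title
        self.status = Status.DRAFT
        self.comments: list[tuple[str, str]] = []

    def visible_to_client(self) -> bool:
        # Stage 1: drafts stay internal
        return self.status is not Status.DRAFT

    def move_to(self, new_status: Status, notify: Callable[[str], None]) -> None:
        if new_status not in TRANSITIONS[self.status]:
            raise ValueError(f"Cannot move from {self.status.value} to {new_status.value}")
        self.status = new_status
        if new_status is Status.TO_APPROVE:
            # Stage 2: the status change itself triggers the notification
            notify(f"'{self.title}' is waiting for your approval")

    def comment(self, author: str, text: str) -> None:
        # Stage 3: feedback lives on the post and does not change its status
        self.comments.append((author, text))


outbox: list[str] = []
post = Post("Spring launch teaser")
post.move_to(Status.TO_APPROVE, notify=outbox.append)
post.comment("client", "Looks good to me")
post.move_to(Status.APPROVED, notify=outbox.append)
print(post.status.value, outbox, post.comments)
```

Every question about where a post stands has one answer: its `status`. The feedback sits on the post itself rather than in a side thread.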

Approval workflow is the first feature agencies configure when they set up ZoomSphere, before the scheduler and before analytics. It is also the topic that generates the most questions in our support conversations during the first two weeks of a new account. The question is not "How do I schedule a post?" or "Where are my analytics?" It is "How do I get my client to approve faster?" The answer is always the same: structure the handoff so the client knows exactly what they are looking at and exactly what they are being asked to do.

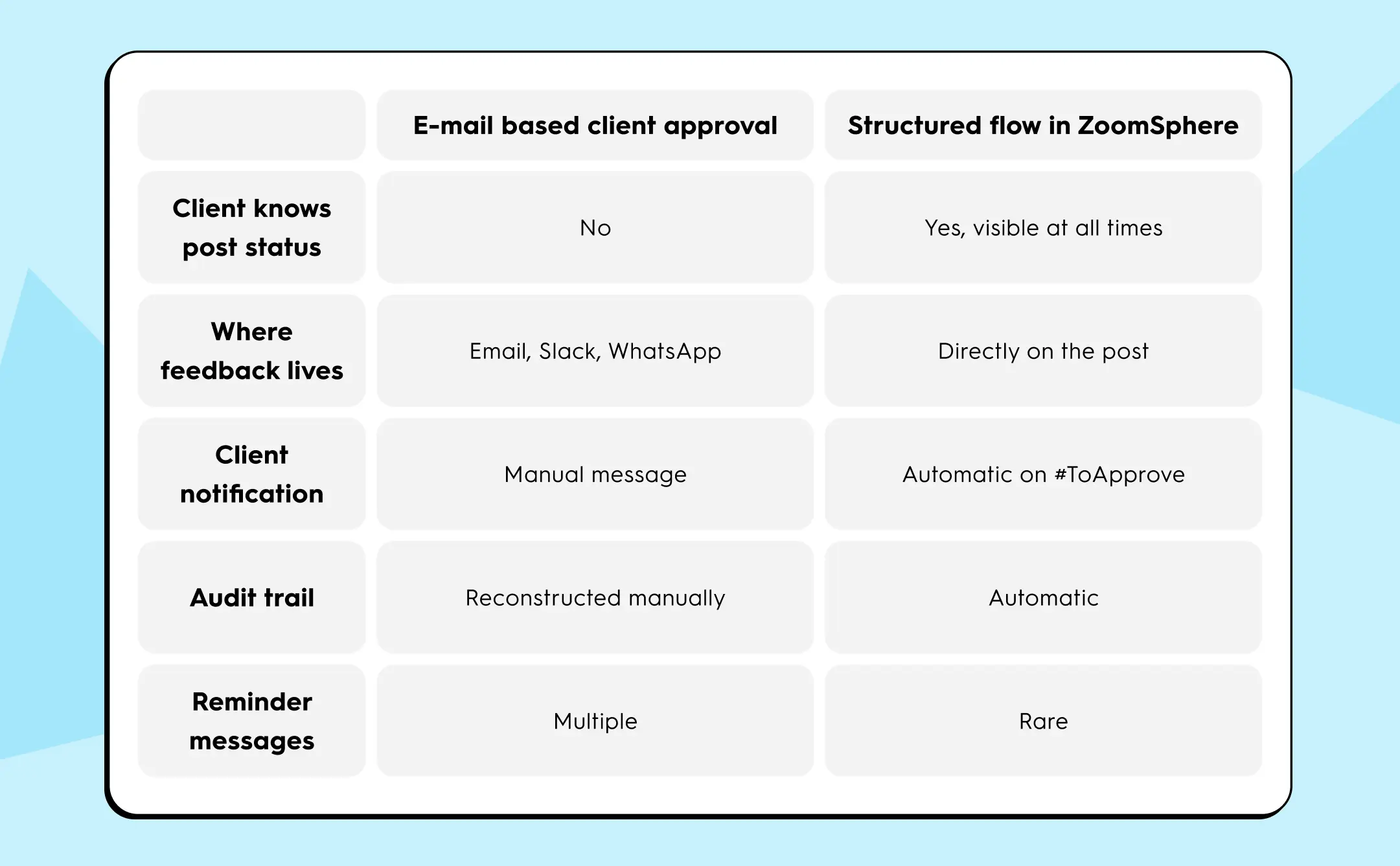

Before and After: The Same Process, Made Visible

Before you read the table, take thirty seconds with your own process. Think about your last client week. Count how many approval conversations happened outside your content tool: email threads, WhatsApp replies, Slack messages in the wrong channel. Count how many posts needed a follow-up nudge before you heard back. That number is your baseline. It is what the right column of this table eliminates.

The last row is the one that changes how the relationship feels day to day. When a client no longer needs to ask where things stand, they stop experiencing your agency as something they have to manage. That shift is not cosmetic. It is the difference between a client who renews and one who quietly starts taking other calls.

How to Set Up Client Approval Rules Before Your Next Post

You do not need a new process document. You need two things: one named approver on the client side, and an agreed response window before content starts moving.

Start with one client. Ask them who gives final sign-off: one person, not "the team." Agree on 48 hours as the baseline for feedback. Name it in your onboarding conversation, not in the contract. Clients respect norms they agreed to in a conversation far more than clauses they skimmed in a PDF.

Then configure the status flow in ZoomSphere: set #ToApprove to automatically notify that person when content reaches the approval stage. From that point, the reminder emails stop because the system sends the notification. Your team has a record of what was approved, who approved it, and when.

When both sides can see the same status on the same post, the ambiguity that was generating all the overhead has nowhere to live.

{{form-component}}

How to Set Client Expectations on Approval Turnaround

The most effective time to set a turnaround expectation is before it is needed. Introduce the 48-hour window during client onboarding, not after the first missed deadline. Frame it as a mutual commitment:

- your team delivers review-ready posts on a predictable schedule

- the client commits to a response window

Both sides have a visible obligation before the first post moves.

Three factors determine whether the agreement holds in practice. First, decision authority needs a name. "The marketing team will review it" is not an approver. One person, reachable through the channel you have agreed on, with the authority to say yes. Second, the window needs to fit how the client actually works. Forty-eight hours is a workable default; if the client travels frequently or has irregular working patterns, negotiate 72 hours upfront rather than chasing them repeatedly on a timeline that was never realistic for them. Third, let the tool carry the reminder. When ZoomSphere sends the client a direct link the moment the post changes status, the notification is not coming from you. It is coming from the process. That changes how the client experiences the request: it is a system prompt, not a person following up.

Clients who understand what is being asked of them and receive a clear, timely prompt to act on it do not need to be chased.
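The two rules (one named approver, one agreed window) fit in a small record per client. This sketch assumes the window is counted in calendar hours from the moment the post reaches #ToApprove. `ApprovalRule`, its fields, and the example names are hypothetical. Weekends and business hours are deliberately not modelled.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class ApprovalRule:
    client: str
    approver: str           # one named person, not "the team"
    window_hours: int = 48  # negotiate 72 up front for clients who travel often

    def due(self, sent_at: datetime) -> datetime:
        """When feedback is due for a post that reached #ToApprove at `sent_at`."""
        return sent_at + timedelta(hours=self.window_hours)

    def is_overdue(self, sent_at: datetime, now: datetime) -> bool:
        return now > self.due(sent_at)


standard = ApprovalRule("Acme", approver="Dana (Head of Marketing)")
traveller = ApprovalRule("Globex", approver="Sam (CMO)", window_hours=72)
sent = datetime(2025, 4, 21, 9, 0)  # a Monday morning
print(standard.due(sent), traveller.due(sent))
```

A post sent Monday at 9:00 is due Wednesday at 9:00 under the default window, or Thursday at 9:00 for the client who negotiated 72 hours.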

Wrap Up

Approval chaos does not live in your clients. It lives in the process. In the email threads that accumulate replies nobody can find, in the Slack messages that served as feedback but not as records, in the Monday morning question ("Where are we with those posts?") your client has started asking every week.

The gap between "client gave feedback" and "approval is recorded" has always existed. What makes it expensive now is scale. At three clients, you can manage it manually. At five, chasing approvals becomes your job. At seven, it starts costing you clients. Not because of a bad post or a missed brief. Because of several months of Mondays where the client was not quite sure what was happening.

Name one approver per client. Agree on 48 hours. Configure the status flow so the client gets a direct link to the post the moment it is ready, not a forwarded email with an attachment they will have to hunt down. Do this once, for one client. See what changes by Friday.

Your approval process is either visible to the client or it is not. One of those builds trust. The other quietly erodes it.

{{cta-component}}

Here's a thought experiment. Open your social media scheduler, ask the AI to write a caption for your latest post, and read it back out loud.

Does it sound like you?

Or does it sound like it could have been written for any brand, in any industry, on any platform, at any point in the last three years?

If you paused before answering, you're not alone. Somewhere between the promise of AI-powered content and the reality of actually hitting publish, something gets lost. The voice. The personality. The tiny details that make a brand feel like it was made by actual humans with actual opinions, rather than assembled from the same template everyone else is using.

The frustrating part is that this isn't an AI problem. AI is genuinely capable of writing content that sounds like your brand. It just doesn't know what your brand sounds like unless you tell it. Specifically.

What Is Brand Voice, and Why Does It Matter for Social Media?

Brand voice is your brand's consistent tone, communication style, and personality. The way your brand sounds across every piece of content, regardless of platform or format.

It's the difference between a brand that feels distinctive and one that blends into the feed. It's why you can read a caption with no logo and still know which brand wrote it. And it's one of the few things in marketing that genuinely compounds. A brand that sounds like itself consistently over months and years builds recognition that has real commercial value.

For social media specifically, brand voice matters more than in almost any other channel. Social moves fast. Your audience sees your content in a scroll, alongside dozens of other brands competing for the same half-second of attention. Either the tone feels familiar and right, and they stop, or it doesn't, and they just keep going.

Social is also high-volume. You're not publishing one piece of content a week. You're publishing multiple times a week, across multiple platforms, often with multiple people involved in creating it. Maintaining a consistent voice at that volume, without systems to support it, is genuinely hard.

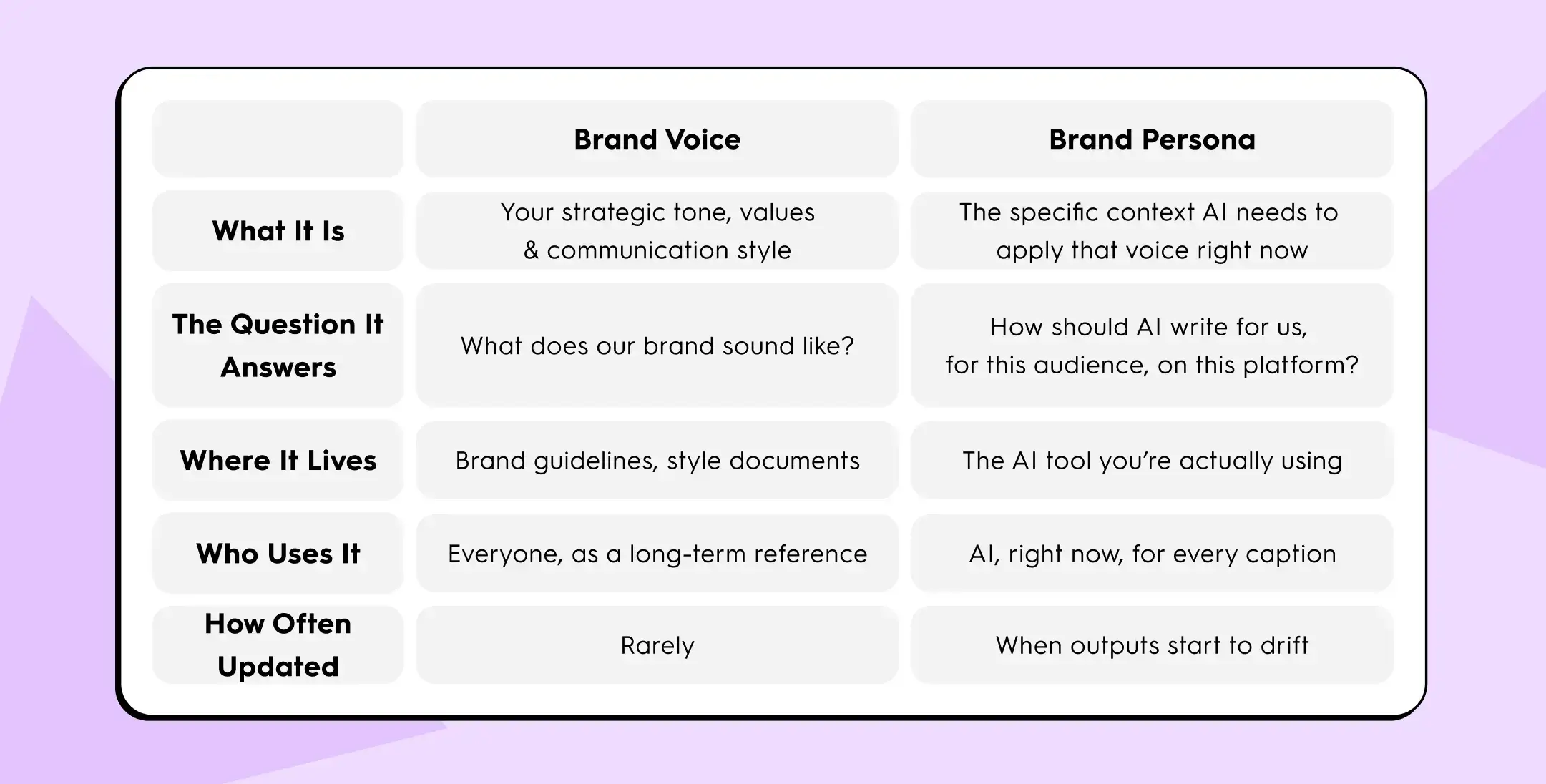

What Is a Brand Persona? And Is It the Same as Brand Voice?

- Brand voice is your what. The strategic layer. The consistent tone and values that make your brand recognizable. It's described in adjectives and principles: direct, warm, irreverent, human. It lives in your brand guidelines and is relatively stable.

- Brand persona is your who and for whom. The operational layer. The specific context that tells AI how to apply that voice in a given moment. Who's reading this? What register is right? What does your audience already know? What do you absolutely never say?

Here's a way to think about the difference: brand voice is what you'd say at a brand strategy workshop. Brand persona is what you'd put in a brief before asking a contractor to write a week of captions.

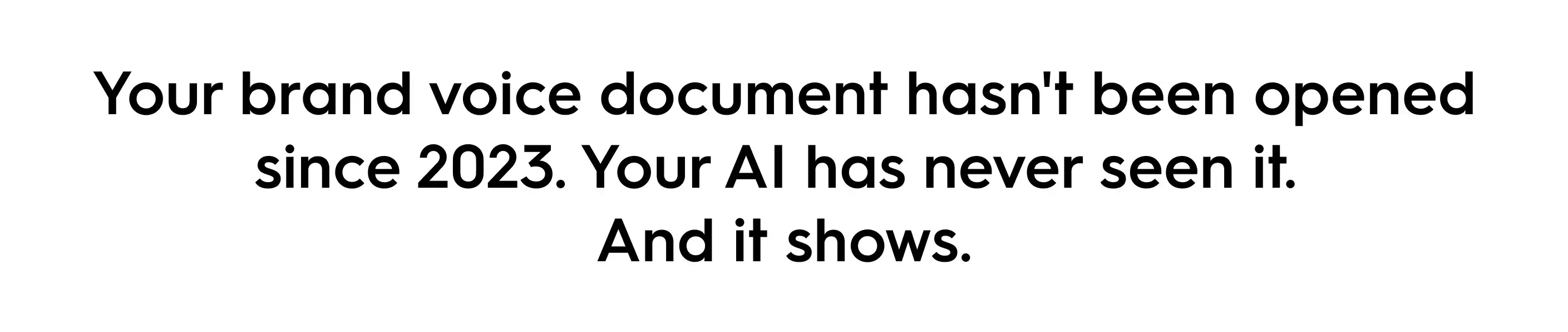

Most brands have invested real effort in the first. They have tone-of-voice sections in their guidelines, adjective lists, do-and-don't examples. Those documents are genuinely useful for onboarding human writers who will absorb them over time.

But AI hasn't absorbed anything. It doesn't remember your brand from session to session. It doesn't know your guidelines exist. Every time you open a new AI session and type "write a caption," you're starting from zero. The AI defaults to the statistical average of everything it was ever trained on. That average sounds polished, upbeat, slightly vague, and ends with a question or a CTA. It sounds, in other words, exactly like the generic captions you've been trying to avoid.

Why Does AI Write Generic Captions?

AI language models are trained on enormous amounts of text from the internet. The "average" social media caption across all that training data is professionally neutral: positive, brand-safe, not too specific, broadly applicable to any company. Something like: "Exciting news! We're thrilled to share [product] is now available. Have you tried it yet? 👇"

No brand actually talks like that. And yet without additional context, that's what you get.

When you give AI a detailed prompt, you're pulling it away from that generic center toward something more specific. The more specific your input, the more distinctive the output, and vice versa.

The problem is that most people are inconsistent about how much context they give. On Monday morning when you have time, you write a proper brief. On Thursday afternoon with 14 posts due, you type "caption about our new feature" and accept whatever comes back. By the end of the week, your feed sounds like it has three different personalities.

This isn't a discipline problem; it's a systems problem. You shouldn't have to manually re-brief the AI on your brand voice every single session. That information should already be there.

The Real Cost of Inconsistent Brand Voice on Social Media

It's easy to treat this as a minor quality issue. Some captions are great, some are a bit off, overall fine. But inconsistent voice has compounding downstream effects.

Audience recognition erodes. Brand voice is one of the primary signals audiences use to recognize a brand without seeing the logo. A feed that sounds different every week gives the audience nothing to latch onto.

Trust takes a quiet hit. When content sounds generic or slightly off-brand, audiences feel it even if they can't articulate it. Over time, that low-grade sense of "something's off" erodes the relationship between brand and audience.

AI discoverability suffers. Answer engines, the AI layers people are increasingly using to discover products and get recommendations, favor content that is specific, consistent, and authoritative. Generic content blends into noise. It doesn't get cited, doesn't get referenced, and doesn't build the kind of recognizable voice that AI systems learn to associate with expertise in a category.

Team knowledge becomes fragile. When brand voice lives in individuals rather than systems, it walks out the door with every team change. Onboarding resets it. Turnover erases it.

What Does a Strong Brand Persona Actually Contain?

A brand persona written for AI use is different from a brand guidelines document written for human writers. Humans absorb nuance over time, but AI needs explicit, operational instructions it can apply right now.

Here's what actually moves the needle:

1. Tone in behavioral language, not adjectives

❌ "Friendly and professional" is not useful to an AI.

✅ "Write like a knowledgeable friend who gives you the straight answer without the preamble, warm but direct, no filler, no corporate speak" is.

The test: could a contractor follow this brief without a follow-up question?

2. An audience description with mindset, not demographics

❌ "B2B marketers aged 25-45."

✅ "Social media managers who are experienced enough to be skeptical of trends, short on time, and will immediately clock anything that sounds written to impress rather than to be useful."

3. An explicit exclusion list

This is the most underrated input in any AI brief. Telling the AI what you never say is often more useful than telling it what you do say, because exclusions prevent the default behaviors that make content feel generic.

✅ "Never use: game-changer, synergy, unlock your potential, thrilled to announce. Never start with 'Are you ready to?' No more than one emoji per caption."

4. Platform-specific notes

Not a separate persona per channel, just brief adjustments at the end. Two or three sentences per platform is enough.

✅ "LinkedIn: analytical, longer, ends with a question. Instagram: punchy, short sentences, emojis at the end only. Facebook: warm, practical, tip-style framing."

5. Your brand's actual point of view

Not your mission statement. Your real take. This is what produces content with an actual perspective instead of content that just describes features.

✅ "We think most marketing advice is noise. We'd rather say one true thing than ten useful-sounding things."

A complete brand persona for AI use is typically 200-400 words. Short enough that the AI can hold it in context, specific enough to meaningfully change the output.

What's the Difference Between a Brand Persona and a Prompt?

A prompt is a one-time instruction. A persona is a saved context that applies automatically to everything.

If brand voice lives in prompts, it's only as consistent as whoever wrote the most recent prompt. Three people writing prompts on three different days will brief the AI differently. The output reflects those differences. The feed accumulates small inconsistencies that, over time, add up to a brand that sounds a bit like itself but never quite commits.

If brand voice lives in a saved persona, one that's applied automatically every time someone opens the scheduler and hits "generate," consistency becomes structural rather than personal. It doesn't depend on who's on the account today or how much time they had to write a brief.

For agencies this is especially valuable. Each client workspace can have its own persona. The conservative financial services client doesn't accidentally start sounding like the edgy DTC startup client. The boundaries are built into the tool, not maintained by remembering which tab you're in.
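To make the "saved context" idea concrete, here is a minimal Python sketch, assuming a simple in-memory store keyed by workspace. `Persona`, `WORKSPACE_PERSONAS`, and `build_prompt` are hypothetical names for illustration; this is not how ZoomSphere or any particular AI tool implements personas. What it shows: whoever types the task, the same saved persona is prepended to it, so consistency comes from the structure rather than from the person.

```python
from dataclasses import dataclass, field


@dataclass
class Persona:
    """A saved brand persona (hypothetical structure, for illustration only)."""
    tone: str
    audience: str
    never_say: tuple[str, ...]
    platform_notes: dict[str, str] = field(default_factory=dict)

    def as_context(self, platform: str) -> str:
        """Render the persona as plain-text context for a language model."""
        lines = [
            f"Tone: {self.tone}",
            f"Audience: {self.audience}",
            "Never use: " + ", ".join(self.never_say),
        ]
        # Only the note for the target platform is included, if one exists.
        note = self.platform_notes.get(platform)
        if note:
            lines.append(f"{platform} notes: {note}")
        return "\n".join(lines)


# One saved persona per workspace, e.g. one per agency client (example values).
WORKSPACE_PERSONAS = {
    "fintech-client": Persona(
        tone="Calm, precise, no hype",
        audience="Finance leads who distrust buzzwords",
        never_say=("game-changer", "to the moon"),
        platform_notes={"LinkedIn": "Analytical, longer."},
    ),
    "dtc-client": Persona(
        tone="Playful, short sentences",
        audience="Shoppers scrolling on their phones",
        never_say=("synergy",),
        platform_notes={"Instagram": "Punchy; emojis at the end only."},
    ),
}


def build_prompt(workspace: str, platform: str, task: str) -> str:
    """Prepend the workspace's saved persona to whatever task the user typed."""
    persona = WORKSPACE_PERSONAS[workspace]
    return f"{persona.as_context(platform)}\n\nTask: {task}"


print(build_prompt("fintech-client", "LinkedIn", "caption about our new feature"))
```

Because the persona is looked up by workspace, the fintech client's exclusion list never ends up in the DTC client's prompts, and a hurried "caption about our new feature" still arrives with the full brief attached.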

How Does ZoomSphere Handle Brand Persona?

ZoomSphere's AI copywriter includes a Persona field built directly into the Scheduler. You write your brand persona once — tone, audience, what to say, what to avoid, platform context — and it's saved to your workspace automatically.

Every caption generated from that workspace uses that persona as its context. You're not re-briefing the AI at the start of every session. You're not hoping the person covering this week remembered to mention the lowercase thing. You set it once, and it applies consistently from that point forward.

The persona is saved per workspace, which means:

- Each client gets their own persona (for agencies)

- Multiple team members generate content with the same voice baseline

- New team members produce on-brand content from day one, without a lengthy onboarding process

- The brand voice doesn't walk out the door when someone leaves

It's worth being direct about what this solves and what it doesn't. A saved persona doesn't replace strategy or creative judgment. Content still needs thought, and the best captions still come from people who understand the brand deeply. What the persona does is raise the baseline quality of AI output so you're editing instead of rewriting, across the conditions that tend to produce the most off-brand content: busy weeks, team changes, Friday afternoons.

{{cta-component}}

Does Brand Persona Work the Same Way Across Platforms?

Not quite. The core persona stays consistent: the brand's fundamental tone, values, and exclusion list are the same whether you're writing for LinkedIn or Instagram. What changes is how that voice expresses itself on each platform.

- LinkedIn rewards substance: longer captions, analytical framing, a strong point of view.

- Instagram rewards brevity: short sentences, punchy opening lines, visual-led framing.

- Facebook sits somewhere in between, with a warmer, more practical register.

- TikTok and Reels captions are often secondary to the video itself, used to add context or a hook rather than carry the full message.

💡 The practical implication: include brief platform notes in your persona. Not a separate persona per platform, just a short addendum that covers the key adjustments. Three sentences per platform is usually enough.

What Happens to Consistency Without a Saved Persona?

Social media consistency, without structural systems, depends entirely on the discipline of every individual creating content, the quality of every manual prompt they write, and institutional memory that lives in people rather than tools.

In practice, that produces a predictable pattern. On a good week (same person, focused, enough time), the content is consistent and on-brand. On a normal week, it's mostly fine, with a few captions that drift in tone as the week gets busier. On a bad week (multiple people, late approvals, 14 posts due Thursday), the feed sounds like it has three different personalities and nobody had time to catch it before publishing.

During team changes, holiday cover, or onboarding, consistency basically resets to zero.

A saved persona raises the floor significantly. The bad-week output becomes closer to the normal-week output. The holiday cover doesn't produce content that sounds nothing like the brand. And the new hire's first week of captions doesn't require a complete redo.

Brand Voice in 2026: Why This Matters More Than It Used To

A few things have changed that make this conversation more urgent.

- AI content volume has increased dramatically. More content is being produced faster, with more AI involvement at every stage. The brands that sound distinctive are the ones that have built systems to maintain that distinctiveness at scale.

- AI-mediated discovery is real and growing. People increasingly find products through AI assistants and answer engines rather than traditional search. Those systems favor content that is specific, consistent, and authoritative. Generic content doesn't get cited, surfaced, or recommended.

- Audiences are getting better at spotting AI-speak. The patterns of undirected AI output (the corporate positivity, the vague enthusiasm, the reflexive questions) are becoming recognizable. When audiences clock it, it reads as lazy. Brands that maintain a genuine, specific voice stand out more than ever, precisely because so much content has drifted toward generic.

{{form-component}}

Wrap Up!

Brand voice doesn't live in a Google Doc that hasn't been opened since last year. It lives in the outputs. In the captions that actually get published, in the content that accumulates into a recognizable identity over months and years.

The gap between "brand voice document" and "brand voice in practice" has always existed. What's changed is that AI has made the volume problem solvable. You can produce more content than ever with less effort. But it's only actually useful if the content sounds like you.

Write the persona. Save it somewhere that applies it automatically. Stop re-briefing the AI on your brand voice every single session, hoping whoever's on the account today remembers all the details.

Your brand voice is either a system or an afterthought. One of those scales, the other doesn't.

The TikTok Next 2026 report — titled "Irreplaceable Instinct" — makes one thing painfully clear: the era of passive, dopamine-fueled content consumption is wrapping up. And what's replacing it is something far more interesting for brands that actually have something to say.

We broke this report down in our recent LinkedIn carousel, but a seven-slide format can only scratch the surface. So here's the full deep dive — with extra context, real data, and a few opinions you didn't ask for but probably need.

Let's get into it.

The Big Theme: Irreplaceable Instinct

Before we unpack the three trend signals, it's worth understanding the overarching thesis. TikTok is calling 2026 the year of "Irreplaceable Instinct" — which sounds a bit like a perfume name, but the idea behind it is solid.

Here's the gist: consumers are becoming hyper-aware of how they spend their time online. The average TikTok user now spends 1 hour and 37 minutes per day on the platform — that's roughly 48.5 hours per month. But increasingly, people want that time to count for something.

The "little treat" mindset — the casual, impulsive scroll that defined the last few years — is giving way to something more deliberate. People aren't just consuming content anymore. They're evaluating it. They're asking: did that actually add anything to my day?

This shift is backed by data from WPP Media's Goat Agency, which calls it "intentional content consumption" — people wanting to control the scroll rather than being controlled by it. They're not anti-social media. They just want to feel more in charge of what they're feeding their brain.

For brands, this is both a challenge and an enormous opportunity. The bar for attention isn't just "entertaining." It's "worth remembering." And TikTok has organized this shift into three trend signals.

Trend Signal 1: Reali-TEA — Fantasy Is Fading, and Honesty Is the New Flex

Remember #delulu? That charmingly unhinged trend where everyone pretended their crush was already their soulmate and their side hustle was already a six-figure business? Yeah, TikTok says we're done with that.

The first trend signal — "Reali-TEA" (a portmanteau of "reality" and "tea," as in the gossip) — captures a collective hunger for authenticity. After years of romanticized feeds and digital escapism, audiences are gravitating toward content that feels grounded, honest, and real.

What's fading out

TikTok flags three hashtags as "What's Done":

#delulu — Once empowering, now it reads as avoidant. People are shifting from escapism to clarity.

#romanticizing — Artfully curated content is starting to feel overly polished. Audiences want the messy reality.

#digitalescapism — Fantasy feeds are being replaced by grounding, useful content that helps people feel present, not distracted.

What's coming in

Four behavioral shifts are driving this:

Forced to lock in. Audiences feel the pressure to "get serious" and they're turning to their communities for affirmation. Shared hacks, collective humor, naming feelings out loud — it's all about normalizing the struggle together.

Culture for me. Identity is no longer tied to one box. People express themselves through layered interests and niche communities — curating a personal culture that feels tailor-made.

Second account found. Brands and creators are breaking free from their expected molds and showing different sides of their personality. Think: your favorite skincare brand suddenly posting memes about existential dread.

Comment react stack. TikTok's comment photo reacts are creating an entirely new visual language. Audiences are stacking reactions, reviving memes, and turning the comment section into its own content channel.

Case study: Oreo's cookie chaos

Oreo nailed this trend by transforming its TikTok channel from a sterile recipe hub into what the report calls a "playful clubhouse." Fans jump on trends, riff on comment culture, and speculate on cross-brand "romances." It's spontaneous, it's fun, and it doesn't feel like marketing.

The result? A 12% increase in shares of Oreo channel content in 2025. And in a world where shares are the top-tier algorithm signal on TikTok (outranking likes and even comments for content distribution), that's not a vanity metric. That's compound growth.

What this means for your strategy

Stop polishing everything to death. Show the behind-the-scenes. Show the real process. If your team is scrambling to meet a deadline — that's content. If your product has a quirk — own it. The brands that win in 2026 aren't the ones with the slickest productions. They're the ones that feel human.

And here's a practical move: use tools like TikTok One Insights Spotlight and TikTok Market Scope to track audience sentiment in real time. Listen first, then create content that reflects what your audience is actually feeling — not what you wish they were feeling.

Trend Signal 2: Curiosity Detours — The Algorithm Rewards Discovery, Not Just Consumption

Here's a stat that still surprises people: 49% of U.S. consumers have now used TikTok as a search engine, up from 41% in 2024. Among Gen Z, that number jumps to 65%.

But here's the nuance most marketers miss: despite higher usage, Gen Z's preference for TikTok over Google actually dropped 50% — from 8% in 2024 to just 4% in 2026. They're not replacing Google. They're supplementing it. TikTok is where they go for recipes, beauty tips, local recommendations, and rabbit holes they didn't know they needed.

That last part is what TikTok's second trend signal — "Curiosity Detours" — is all about.

The death of passive scrolling

Three more "What's Done" hashtags tell the story:

#autopilot — Going through the motions without intention? That's fading. People want to feel present.

#endlessscroll — Audiences are hopping out of their For You feeds to explore comments and the search bar. The journey matters.

#npcmode — "Non-player character" energy is out. Main character energy is back.

How it works in practice

On TikTok, the consumer journey is anything but linear. Someone searching for "running shoes" might end up watching a video about barefoot hiking, which leads them to a creator talking about trail nutrition, which somehow deposits them in a community of ultra-marathon runners in their 50s.

TikTok calls these "curiosity detours" — and they represent unexpected entry points where brands that show up thoughtfully can earn real, meaningful attention.

The report recommends a three-step approach using TikTok Market Scope:

Step 1: Analyze top searches in your vertical to understand what consumers are most curious about.

Step 2: Identify the leading topic — the one with the strongest and growing interest.

Step 3: Expand the lens. Look at related search terms to uncover the broader motivations and discovery journey behind that topic.

Case study: Duracell's K-Pop surprise

This is easily the most unexpected case study in the report. Duracell, a battery company, traced TikTok search journeys and discovered a surprising connection with the K-Pop community. Why? Because fans rely on Duracell batteries to power their glowing lightsticks at concerts.

What started as a niche discovery became a full-blown growth audience. The result: +483% growth in followers. A battery brand, adding nearly five times its starting follower count. Because of K-Pop lightsticks.

If that doesn't convince you that curiosity detours are real, nothing will.

What this means for your strategy

Stop only targeting the obvious keywords. Your next growth audience might be hiding in a community you've never considered. Use TikTok's Content Suite to dig into how people actually talk about your brand (not how you think they talk about it). Look for the adjacent spaces, the niche communities, the cultural moments that naturally align with your brand identity.

The brands that thrive won't be the ones screaming "buy our product" in the feed. They'll be the ones who pop up at just the right moment during someone's curiosity journey — and add genuine value.

Trend Signal 3: Emotional ROI — Why "Viral" Doesn't Mean "Valuable" Anymore

The third trend signal is the one with the most direct implications for anyone selling anything. TikTok is calling it "Emotional ROI" — and it's essentially a death sentence for impulse-driven, hype-first marketing.

The shift from impulse to intention

Three more "What's Done" hashtags:

#viralbuy — Viral fame isn't enough to win carts anymore. Consumers are choosing substance over stunt products.

#influencers — The polished influencer aesthetic is losing its grip. Creators are being valued for honesty, craft, and community — not follower count.

#justbecause — People aren't buying on a whim. Every purchase has to earn its place by delivering value, meaning, or genuine joy.

The new "why to buy"

TikTok identifies three key shifts in shopping behavior:

1. Expanding essentials. Consumers are broadening their definition of "essential" — not by price, but by meaning and belonging. Something qualifies as essential when it supports who they are or who they're becoming.

2. Evidence economy. TikTok is becoming a verification hub. Before committing to a purchase, people scroll through the comment section looking for honest, unfiltered community reviews. The comments are the social proof. This is huge — it means your brand's reputation is partly being written by strangers in a comment thread.

3. Tastemakers over influencers. Audiences look to creators for genuine, candid guidance. Those who embrace honesty are gaining the most influence. The difference between a "tastemaker" and an "influencer" in 2026? The tastemaker tells you when something isn't worth buying.

Case study: Audible's five-star moment

Audible cracked the Emotional ROI code by doing something beautifully simple: they asked their TikTok audience to share their five-star book recommendations.

That's it. No elaborate campaign. No celebrity partnerships. Just a genuine question directed at #BookTok — and the community flooded the comments with passionate picks. Audible went from being "the authority on audiobooks" to being "a brand that's part of the conversation."

The result: +376% higher reach than the channel average. All because they handed the mic to their community.

What this means for your strategy

Show how your brand delivers real, everyday value. Not in a "here are our features" way — in a "here's why this actually matters to your life" way. Whether that's through cost-per-wear, emotional payoff, or community connection, the "why to buy" has to be obvious and honest.

Lean into the evidence economy. Encourage community reviews. Let the comment section become your social proof. And partner with creators who are genuinely connected to your space — not the ones with the most followers, but the ones whose audience actually trusts them.

The Metric That Matters Most in 2026: Save Rate

If there's one actionable takeaway from all three trend signals, it's this: saves are the new currency.

In a world where Emotional ROI matters more than viral reach, where curiosity drives deeper engagement, and where authenticity earns trust — the "save" action is the ultimate endorsement. It tells the algorithm: this content has lasting value. I want to come back to it.

According to Sprout Social's 2026 algorithm breakdown, saves and shares now outweigh simple likes as ranking signals. TikTok's algorithm increasingly favors fewer but deeper engagements — content that travels through social graphs and into private spaces like DMs and group chats.

This isn't just a TikTok thing, either. The same pattern is emerging across Instagram, YouTube Shorts, and LinkedIn. Platforms are all moving toward rewarding content that people deliberately choose to keep — not just content that makes them pause for a second.

For your content strategy, this means optimizing for value retention, not just attention. Ask yourself: Would someone save this to revisit later? If the answer is "probably not," it's entertainment, not strategy.
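Save rate is simple to compute once you pick a denominator. The sketch below assumes save rate = saves ÷ views (some teams divide by reach or impressions instead; use whichever your platform reports consistently). `PostStats`, `save_rate`, and `rank_by_save_rate` are hypothetical helpers, and the three posts are made-up numbers for illustration only.

```python
from dataclasses import dataclass


@dataclass
class PostStats:
    name: str
    views: int
    saves: int
    likes: int


def save_rate(post: PostStats) -> float:
    """Saves per 100 views (assumed definition); 0.0 for posts with no views."""
    return post.saves * 100 / post.views if post.views else 0.0


def rank_by_save_rate(posts: list[PostStats]) -> list[tuple[str, float]]:
    """Return (post name, save rate %) pairs, highest save rate first."""
    scored = [(p.name, round(save_rate(p), 2)) for p in posts]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


# Made-up numbers for illustration only.
posts = [
    PostStats("Trend meme", views=50_000, saves=150, likes=4_000),
    PostStats("Checklist carousel", views=8_000, saves=320, likes=300),
    PostStats("Product teaser", views=12_000, saves=60, likes=900),
]

for name, rate in rank_by_save_rate(posts):
    print(f"{name}: {rate}% save rate")
```

In this invented example the meme wins on views and likes but ranks last on save rate, while the checklist carousel, with a fraction of the views, is the post people actually kept.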

Five Takeaways for Your 2026 Content Strategy

Let's distill all of this into moves you can actually make:

1. Kill the perfection reflex. The brands winning on TikTok in 2026 are showing unfiltered process, honest takes, and personality. If every piece of content goes through four rounds of approval and a legal review before it can breathe, you're already behind. Show the messy middle.

2. Map your curiosity detours. Use TikTok Market Scope to find out what your audience is actually searching for — then look at the adjacent searches. Where does curiosity lead them after they find you? Show up in those unexpected places with genuine value.

3. Build for saves, not just views. Create content people want to come back to: frameworks, checklists, how-tos, honest reviews, comparison breakdowns. The save button is the most honest feedback loop you have.

4. Let your community co-create. Audible asked for book recs. Oreo let fans speculate on cross-brand romances. The pattern is clear: the best brand content in 2026 isn't created by the brand. It's sparked by the brand and completed by the community.

5. Track the right signals. Stop obsessing over reach and impressions. Start tracking save rate, share rate, comment depth, and follower quality. These are the metrics that predict long-term brand equity — not just a viral flash.

What About the TikTok Ban Situation?

Look, we can't write about TikTok strategy in 2026 without acknowledging the elephant in the room. The platform has faced ongoing regulatory scrutiny, trust among Gen Z users has declined (74% now think twice about who they engage with, and 60% report trusting TikTok less), and the geopolitical drama hasn't exactly calmed down.

But here's the thing: whether TikTok remains in its current form or evolves, the behavioral shifts described in this report aren't platform-specific. The move toward intentional consumption, authenticity, and emotional ROI is happening everywhere. If TikTok disappeared tomorrow, these trends would simply accelerate on Instagram Reels, YouTube Shorts, and whatever comes next.

So plan for the trends, not just the platform.

How This Connects to Your Content Workflow

All of this sounds great on paper. But executing on three trend signals, monitoring save rates, mapping curiosity detours, and empowering community co-creation — while also, you know, running your actual business — requires a workflow that doesn't make you want to throw your laptop out the window.

This is where having your content pipeline organized actually matters. When you can schedule, preview, approve, and analyze all your social content in one place, you free up the mental bandwidth to think about what to post rather than how to post it.

If your current setup involves three browser tabs, a shared Google Sheet, and a Slack thread titled "URGENT — client approval needed," you might want to explore how ZoomSphere can streamline that chaos. Especially the bulk approval workflows — because sending 47 individual approval emails in 2026 is not the vibe.

Final Thought

The TikTok Next 2026 report isn't really about TikTok. It's about what happens when an entire generation of consumers starts demanding more from the content they consume — and the brands that create it.

Passive scrolling is fading. Intentional engagement is rising. And the brands that treat social media like a one-way broadcast channel are going to feel increasingly invisible.

The good news? If you actually have something real to say — something honest, something useful, something that respects your audience's time — 2026 might be your best year yet.

Now stop reading and go check your save rate.


Performance reporting has become the marketing version of unpaid overtime… except it’s not overtime, because it never ends. And I know that sounds dramatic, but when 88% of marketers say reporting devours most of their week, it stops feeling like work and starts feeling like a yearly subscription to spreadsheet misery.

What makes it slightly absurd (and, honestly, a bit insulting) is this: 26% of clients don’t even open the analytics, and 24% skim your thoughtful recommendations only to go with their gut anyway. You spend hours polishing charts; they spend eleven seconds wondering why a bar is blue.

Perhaps this is why so many teams whisper the same confession: “If one more person asks for a ‘quick update,’ I might just… no.”

This article is for marketers who are tired, brilliant, over-briefed, under-slept… and still expected to squeeze meaning out of numbers that sometimes refuse to behave.

If that’s you, good. You’re the person this was written for.

{{form-component}}

What Is Performance Reporting (And Why Has It Become a Full-Time Job You Didn’t Apply For?)

You know what’s odd? The deeper you get into performance reporting, the more it behaves like a part-time role you never negotiated a salary for. That’s a sharp way to start, but tell me it’s wrong. Every marketing performance report feels like an unpaid internship you never applied for. Except the intern is you.

At its core, performance reporting is the act of turning platform data into something a client can read in under eight minutes without reaching for pain relief. That’s it. Not complicated, not mystical, not a sacred ritual guarded by senior analysts. Just… clarity.

The Reason Clients Hate Performance Reports

Most reports weren’t created to guide decisions. They were created to prove activity. Hours logged. Content shipped. Busywork documented.

But clients aren’t grading you for effort. They’re asking for:

clarity, so they know whether the numbers should worry them;

context, so they’re not left Googling acronyms;

and confidence, so they can move money toward what’s actually working.

Everything else is noise. And yes, it’s slightly uncomfortable to say, but it’s the one truth that separates a report that gets skimmed from one that actually shapes strategy.

What Clients Actually Look At First (and What They Ignore Completely)

Look, clients open your beautifully structured report, skim one section, jump straight to whatever confirms their fear or relief, and then skip to your recommendation. The rest sits there untouched, like the leftovers of a meeting nobody remembers scheduling. The pattern holds across industries: what clients want from a marketing report is speed, clarity, and a clear sense of whether anything requires action.

The “8-Minute Attention Window”

There’s a reason clients move fast: the average decision-maker spends 6–8 minutes with a performance report before forming an opinion.

It’s a judgment call, typically made under pressure. Not a full reading. Not a thorough breakdown of every chart.

Eight minutes is not enough time to decode platform data scattered across screenshots and graphs. But it is enough time for them to decide whether you understand their priorities — whether you “get their business” in a way that saves them mental effort.

This is why long-winded reports fail. Not because of bad data, but because time-starved leaders must decide quickly and defend their decisions later.

The First Thing They Search For

There’s always one section they rush to:

What changed, and why it matters.

In clearer terms, they’re looking for four simple lines:

- What improved

- What dipped

- Whether they should worry

- And what you advise next

If you don’t provide those explanations upfront, they’ll hunt for them… and usually give up halfway.
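If your platform exports give you last month’s and this month’s totals, the “what improved / what dipped” lines can be drafted mechanically. Below is a minimal Python sketch, assuming metrics arrive as plain name-to-number dictionaries. `summarize_changes` is a hypothetical helper, and you have to tell it which metrics are better when lower (cost metrics, for example); the numbers are invented.

```python
def summarize_changes(
    previous: dict[str, float],
    current: dict[str, float],
    lower_is_better: frozenset[str] = frozenset(),
) -> dict[str, list[str]]:
    """Sort metrics into 'improved' and 'dipped' by month-over-month % change.

    'dipped' means the metric moved in the wrong direction, which for a
    lower-is-better metric such as cost per 1K is an increase.
    """
    summary: dict[str, list[str]] = {"improved": [], "dipped": []}
    for metric, now in current.items():
        before = previous.get(metric)
        if not before:  # no usable baseline (missing or zero): skip it
            continue
        change = (now - before) * 100 / before
        if change == 0:
            continue  # unchanged metrics are left out of both lists
        better = change < 0 if metric in lower_is_better else change > 0
        summary["improved" if better else "dipped"].append(f"{metric}: {change:+.1f}%")
    return summary


# Hypothetical totals for two months.
last_month = {"reach": 40_000, "saves": 500, "cost_per_1k": 4.0}
this_month = {"reach": 46_000, "saves": 450, "cost_per_1k": 5.0}
print(summarize_changes(last_month, this_month, lower_is_better=frozenset({"cost_per_1k"})))
```

The code only covers the first two lines. Whether the client should worry, and what you advise next, still needs a human.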

ZoomSphere’s AI Copywriter actually saves marketers from rewriting the same explanation every month. You feed it raw notes, and it produces clean, human-sounding context in your brand voice. No template fatigue. No late-night rewriting of the same monthly intro for the twelfth time. And, importantly, no misalignment between what you meant and what the client thinks you meant.

The Things They Pretend to Read (But Don’t)

Let’s be brutally honest for a second.

Here’s the graveyard of report elements clients scroll past with the enthusiasm of someone reviewing a tax form:

- Charts requiring zooming — if they can’t read it in one glance, it loses relevance.

- Platform-by-platform dumps without context — raw counts mean nothing without comparison or stakes.

- Tables without reference points — a number without a timeline is just a number, not insight.

The busiest clients skip these because they slow the reading flow. It’s not personal. It’s cognitive load.

The One Thing They Always Read

Your recommendation.

Every single time.

The “If this were my money…” line is the one section that earns undivided attention.

It signals clarity, confidence, and strategic ownership.

This is the real north star of any marketing performance report. Everything else exists to support it, not the other way around.

{{cta-component}}

The Metrics That Matter

Most of the numbers marketers still obsess over have the nutritional value of cardboard. They fill space, they look “professional,” and they create a false sense of completeness. But clients care about the handful of signals that tell them whether their money is doing something real… or just pacing in circles.

Now, this is the part where you stop fighting your reports and finally align them with how decisions are actually made.

Awareness Goals: The Only Numbers That Signal Brand Lift

Awareness metrics are not glamorous, but they’re the first place any informed leader glances when judging momentum. And despite their simplicity, they consistently outperform “creative-but-pointless” metrics in strategic value.

Reach across platforms

If your content isn’t getting in front of enough people, nothing downstream matters.

Not a dramatic opinion; it’s a structural truth across all paid and organic systems.

Impressions

An impression isn’t a promise of attention, but it is a footprint. It shows distribution power. And clients track this even when they say they don’t.

Frequency sanity check

Too low? No one remembers you. Too high? People feel stalked. There’s a middle zone that keeps brands memorable without causing fatigue — and clients expect you to know where that line is for their category.

Cost per 1K impressions (CPM)

A simple efficiency indicator. Not a full diagnostic, but a reliable early warning signal when something is off.

When someone claims awareness reporting is “fluffy,” it usually means their awareness layer is missing context.
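Frequency and cost per 1K are simple ratios, which is exactly why they work as early warnings. Here's a minimal Python sketch of the arithmetic, assuming you already have one client's monthly reach, impressions, and spend for a single platform. The class, the function names, and the sample numbers are hypothetical, and each platform counts reach and impressions in its own way, so treat this as an illustration of the ratios rather than any dashboard's exact calculation.

```python
from dataclasses import dataclass


@dataclass
class AwarenessSnapshot:
    """Hypothetical monthly awareness totals for one client on one platform."""

    reach: int        # unique accounts that saw the content at least once
    impressions: int  # total number of times the content was displayed
    spend: float      # media spend in the account currency (0 for organic)


def frequency(s: AwarenessSnapshot) -> float:
    """Average number of times each reached account saw the content."""
    if s.reach == 0:
        return 0.0  # nothing delivered, so there is no frequency to read
    return s.impressions / s.reach


def cost_per_1k(s: AwarenessSnapshot) -> float:
    """Cost per 1,000 impressions (CPM): spend * 1,000 / impressions."""
    if s.impressions == 0:
        return float("nan")  # undefined until something is delivered
    return s.spend * 1000 / s.impressions


if __name__ == "__main__":
    month = AwarenessSnapshot(reach=20_000, impressions=50_000, spend=400.0)
    print(f"frequency: {frequency(month):.2f}")      # frequency: 2.50
    print(f"cost per 1K: {cost_per_1k(month):.2f}")  # cost per 1K: 8.00
```

With 20,000 people reached and 50,000 impressions, each person saw the content 2.5 times on average. Whether that sits in the healthy middle zone depends on the client's category, which is the judgment the report should state out loud.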

Engagement Goals: The Numbers That Reveal Content Health

Engagement tells a different story: the one you actually build campaigns around.

Saves

This is the closest thing social metrics have to proof of usefulness. Saves mean relevance. Saves forecast loyalty. Saves matter far more than most marketers admit.

Shares

The only true amplification signal. No algorithmic trick outperforms a human deciding content is worth passing along.

Comments (sentiment included)

Volume without sentiment is misleading. Sentiment without volume is incomplete. You need both to understand whether the content is resonating or irritating.

Watch time / Completion rate

These two metrics expose content quality instantly. If people leave at the start, the idea missed. If they stay, the idea holds.

These metrics form the “content health core.” Everything else you could add is secondary or decorative.
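Raw counts of saves and shares favor whichever post reached the most people. Here's a minimal Python sketch, assuming per-post exports with reach, saves, shares, comment counts, and video plays, that turns the content health core into rates so posts can be read side by side. The positive/negative comment split is an assumption: most dashboards don't label sentiment, so someone (or a tool) has to tag comments first. All names and figures are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class PostStats:
    """Hypothetical per-post numbers exported from a platform dashboard."""

    name: str
    reach: int
    saves: int
    shares: int
    positive_comments: int  # assumes comments were tagged for sentiment
    negative_comments: int
    video_views: int        # plays that started (0 for non-video posts)
    completed_views: int    # plays watched to the end


def rate(part: int, whole: int) -> float:
    """Fraction of `whole` represented by `part`, or 0.0 when there is no data."""
    return part / whole if whole else 0.0


def content_health(p: PostStats) -> dict[str, float]:
    """Express the content health core as rates so different-sized posts compare fairly."""
    comments = p.positive_comments + p.negative_comments
    return {
        "save_rate": rate(p.saves, p.reach),
        "share_rate": rate(p.shares, p.reach),
        "comment_rate": rate(comments, p.reach),
        # Net sentiment in [-1, 1]: +1 means all positive, -1 all negative.
        "net_sentiment": rate(p.positive_comments - p.negative_comments, comments),
        "completion_rate": rate(p.completed_views, p.video_views),
    }


if __name__ == "__main__":
    posts = [
        PostStats("carousel", 4_000, 120, 40, 18, 2, 0, 0),
        PostStats("reel", 10_000, 150, 100, 30, 10, 8_000, 2_000),
    ]
    for post in posts:
        health = content_health(post)
        print(post.name, {k: round(v, 3) for k, v in health.items()})
```

In this made-up pair, the reel collects more saves in absolute terms (150 versus 120), but the carousel's save rate is twice as high (3% versus 1.5%), which is the kind of reading a raw count hides.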

Conversion Goals: When “Good Enough” Isn’t Good Enough

This is the zone where your report stops being “interesting” and becomes financially accountable.

CPA (cost per acquisition)

The baseline sanity check for acquisition systems.

ROAS (return on ad spend)

The number that gets screenshotted and dropped into executive chats more than any other.

Revenue attribution

Reliable when your tracking is strong. Dangerous when it isn’t. Leaders know the difference.

Assisted conversions

The misunderstood sibling of last-click attribution. Clients rarely ask for it, but when you add it, they finally understand the pipeline they’re actually funding.
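CPA and ROAS are single divisions; assisted conversions need the full path each customer took. Here's a minimal Python sketch, assuming you can export each converting customer's ordered list of channel touchpoints. It uses one simplified convention (the last touch gets last-click credit, and every other distinct channel on the path earns one assist). Analytics tools define assists in their own ways, so the function names, the convention, and the sample paths are all illustrative.

```python
from collections import Counter


def cpa(spend: float, conversions: int) -> float:
    """Cost per acquisition: spend divided by conversions."""
    if conversions == 0:
        return float("inf")  # money spent, nothing acquired yet
    return spend / conversions


def roas(revenue: float, spend: float) -> float:
    """Return on ad spend: attributed revenue per unit of spend."""
    if spend == 0:
        return 0.0
    return revenue / spend


def last_click_and_assists(paths: list[list[str]]) -> tuple[Counter, Counter]:
    """Count last-click conversions and assists per channel.

    Each path is the ordered list of channels one converting customer touched.
    The final touch gets last-click credit; every other distinct channel on
    the path gets one assist (a simplified, illustrative convention).
    """
    last_click: Counter = Counter()
    assists: Counter = Counter()
    for path in paths:
        if not path:
            continue
        closer = path[-1]
        last_click[closer] += 1
        for channel in set(path[:-1]) - {closer}:
            assists[channel] += 1
    return last_click, assists


if __name__ == "__main__":
    print(f"CPA: {cpa(1_500.0, 30):.2f}")          # CPA: 50.00
    print(f"ROAS: {roas(6_000.0, 1_500.0):.1f}")   # ROAS: 4.0
    paths = [
        ["social", "email", "search"],
        ["social", "search"],
        ["email"],
    ]
    closers, helpers = last_click_and_assists(paths)
    print("last click:", dict(closers))
    print("assists:", dict(helpers))
```

In the sample paths, search closes two conversions while social never closes one but assists two, which is exactly the part of the pipeline a last-click-only report leaves out.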

Why You Must Stop Sending Vanity Metrics

If you keep flooding clients with numbers that don’t influence decisions, they eventually treat all your numbers as noise. And noise, in their mind, signals something worse:

“My agency might be hiding something.”

Vanity metrics don’t protect you.

They corrode trust.

And they distract from the metrics that actually justify budget continuation.

How to Build a Performance Report Clients Actually Read

Most performance reports are unread not because clients are careless, but because the reports feel like unpaid homework. If you’ve ever prepared a 22-slide deck only for the client to skip straight to your recommendation, you already know the painful reality.

So here’s the unfiltered version of how to build a performance report people actually read… the version that respects time, intelligence, and attention spans that shrink every quarter.


Start With the Story, Not the Spreadsheet

If you lead with numbers, you lose them.

If you lead with meaning, they follow.

Clients move fast; they scan for orientation before detail. That means the first thing they consume shouldn’t be a data cluster — it should be the thread that holds the data together. A short narrative explaining why the month behaved the way it did. A direct, human summary. Not over-engineered. Just honest.

Numbers should support the reasoning, not replace it. When the story is clear, the data stops feeling like static.

The “One-Page Truth Sheet” Framework

This is the part where marketers breathe again. A one-page format forces discipline and produces clarity clients can trust. The One-Page Truth Sheet has six elements… all of them essential, none of them bloated:

1. The 30-second summary

This is the context-setting paragraph ZoomSphere’s AI Copywriter drafts for you in seconds. You feed it facts; it gives you a clean explanation in your brand voice.

2. What worked and why

Not a list of “good numbers.” The cause behind the improvement.

3. What didn’t and why

Clients don’t fear dips — they fear unclear dips.

4. The pivotal metric from the dashboard

One number that shaped the month. Not ten.

5. Your recommendation

This earns more attention than any chart.

6. A tiny “watch this” section

A fast, future-facing note that signals proactive thinking.

No filler. No 11-page annex.

The Monthly Performance Report Template That Doesn’t Make You Cry

Here’s a truth most marketers eventually accept: the perfect monthly performance report template is brutal in its simplicity.

One page.

Three charts that actually matter.

One insight that changes the next move.

One recommendation that removes guesswork.

Zero platform screenshots, because screenshots are visual clutter and rarely survive client scrutiny.

When clients say they want transparency, they rarely mean more graphs. They mean fewer, clearer, sharper signals. And a one-page template forces exactly that.

What Clients Actually Want to See (The Parts They Never Say Out Loud)

See, clients don’t want everything. They want clarity. And, weirdly, they often want that clarity in the most stripped-down form possible. The problem is they rarely have the phrasing (or courage) to say this outright. So agencies keep piling on more graphs, more numbers, more dashboards, thinking “volume” means “value.”

But if you study what clients actually want to see in a marketing report, the list is embarrassingly short. Almost insultingly short. And yet, most reports never hit it.

Honesty (but with dignity)

Clients don’t fire agencies for weak results.

They fire agencies when the results are unclear.

If the report leaves them confused, they assume you’re confused. And if you’re confused, they assume their money is wandering through the month without supervision. Bad months don’t damage trust; murky months do.

This is why honesty matters more than spin. Not the brutal, chaotic sort of honesty that makes everyone tense. The dignified kind. The version that says:

- This worked.

- This didn’t.

- We know why.

- Here’s how we’re fixing it.

Clarity signals competence. Competence signals safety. And safety (whether anyone says it or not) is the real product clients are buying from you.

A Clean Narrative That Ties Directly to Revenue

Every client, even the ones who insist they “care about brand,” still filters your work through one lens:

Does this improve revenue conditions?

That means even “soft metrics” must earn their place. If you talk about impressions, explain whether they brought down acquisition costs. If you mention engagement, tie it to improved audience quality. If you mention saves or shares, explain how they forecast retention.

This is the number one gap in most reports. The data is fine. The story is missing the revenue spine.

And Luke Matthews puts it perfectly. Luke (a marketer who ran an agency for five years) eventually abandoned detailed reporting altogether. His words are the verbal slap most marketers secretly need:

[Image: Luke Matthews’ quote on why he abandoned detailed client reporting]

Luke said the quiet part out loud: clients want revenue-anchored truth, not ornamental analytics.

The “Explain Like They’re on a Moving Train” Rule

This rule will save your reporting life.

You must assume your client is reading the report:

- Between two meetings

- On mobile

- While someone interrupts them with “Quick question?”

If your report can’t survive this environment, it won’t survive at all.

This is why complexity must die. It’s not because clients are intellectually incapable. It’s because their attention is permanently fractured. If a report requires stillness and silence to understand, it’s already lost.

A performance report should be readable with the cognitive load of scanning a receipt. Short sentences. Clean reasoning. Fast context. No internal puzzles.

Reports That Don’t Trigger Panic

Panic doesn’t come from bad data.

Panic comes from unexplained data.

You stop the spiral by covering three things, in this exact order:

1. What happened

The factual state of the month.

2. Why it happened

The reasoning behind the shifts.

3. How you’re adjusting

The confident next step.

This trifecta is the psychological bedrock of trust. Remove one and the report becomes noise. Include all three and the client feels guided, not overwhelmed.

And yes, this sounds simple. Almost suspiciously simple. But the truth is, this is the only structure clients actually absorb.

Reporting Workflows Are Destroying Marketing Teams

If marketers ever unionize, the first item on the protest banner won’t be “better tools” or “more budget.” It’ll be:

“Please stop making us produce reports nobody reads.”

Because whether anyone admits it or not, the reporting workflow for marketing teams has quietly become the part of the job that drains the most dopamine in the shortest amount of time.

The Reporting Hangover

Here’s the stat that explains your exhaustion:

73% of marketers say they get weekly ad-hoc reporting requests – yes, weekly.

Which is why your work week now resembles something between a scavenger hunt and a punishment ritual:

Three dashboards.

Seven PDFs.

Four platforms with slightly different numbers that refuse to match.

And a Slack message at 8:17 PM that simply says:

“Numbers???”

If reporting had calories, you would burn enough in a week to qualify as endurance training.

Why Your Reporting Workflow Is Burning You Alive

Let’s call out the villains directly… the things turning smart marketers into tired data janitors:

Fragmented tools

Every platform demands its own log-in, its own export, its own interpretation. You spend more time switching than analyzing.

Duplicate exports

Download the CSV. Clean the CSV. Realize the client switched KPIs. Download it again. Repeat until sanity slips.

Manual screenshotting

The moment that proves reporting workflows were designed by someone who hated efficiency. Nothing kills momentum like cropping graphs you already saw 12 times.

Five stakeholders, each with a “quick change”

A “quick change” is never quick. It spawns three more questions, delays approval, and reshapes the entire deck.

And none of this is strategic. None of this improves performance. It’s maintenance disguised as necessity.

The (Rather Funny) Psychological Toll

There’s a moment every marketer knows: the moment when you stare at a half-built report, sigh in a way your therapist would study closely, and reach for snacks, caffeine, or a whispered plea to whichever higher power handles digital fatigue.

If your reporting workflow requires comfort food, coffee, and a small prayer just to complete one cycle… the workflow is broken.

Not you.

Not your team.

The workflow.

And that’s the point most marketers forget. Reporting shouldn’t feel like a survival test. It should feel like a tool for clarity, alignment, and decision-making… not a monthly endurance trial.


Agency Performance Reporting Best Practices (That Don’t Make You Quit Your Job)

There’s a reason most agencies quietly resent reporting: it asks you to prove your competence over and over, even when the work itself is strong. But when you peel back the layers of agency performance reporting best practices, a pattern emerges: the most effective agencies aren’t the ones building gigantic reports. They’re the ones building reports clients can actually use.

The “Stop Over-Proving Yourself” Principle

You don’t need to build a dissertation every month. And clients don’t want one.

What they want is consistency, direction, and a signal that you’re steering the ship with intention — not drowning them in data out of insecurity.

Over-proving is an agency survival instinct. You had one bad month once, or a client questioned a decision, and suddenly every report becomes a 19-page apology disguised as “thoroughness.”

Truth is: